Data augmentation is a core technique in deep learning for improving model performance. It enriches training datasets without collecting new data, which helps with two persistent problems: limited data and overfitting. By training on systematically transformed examples, models see a broader range of patterns and tend to generalize more accurately and reliably. This article covers what data augmentation is, where it is applied, the tools that implement it, the challenges it raises, and where it is heading.

Understanding Data Augmentation

Data Augmentation: A Key Player in Deep Learning Success

In the world of deep learning, a technique called data augmentation is making waves for its crucial role in enhancing model performance. But what exactly is data augmentation, and why does it matter so much?

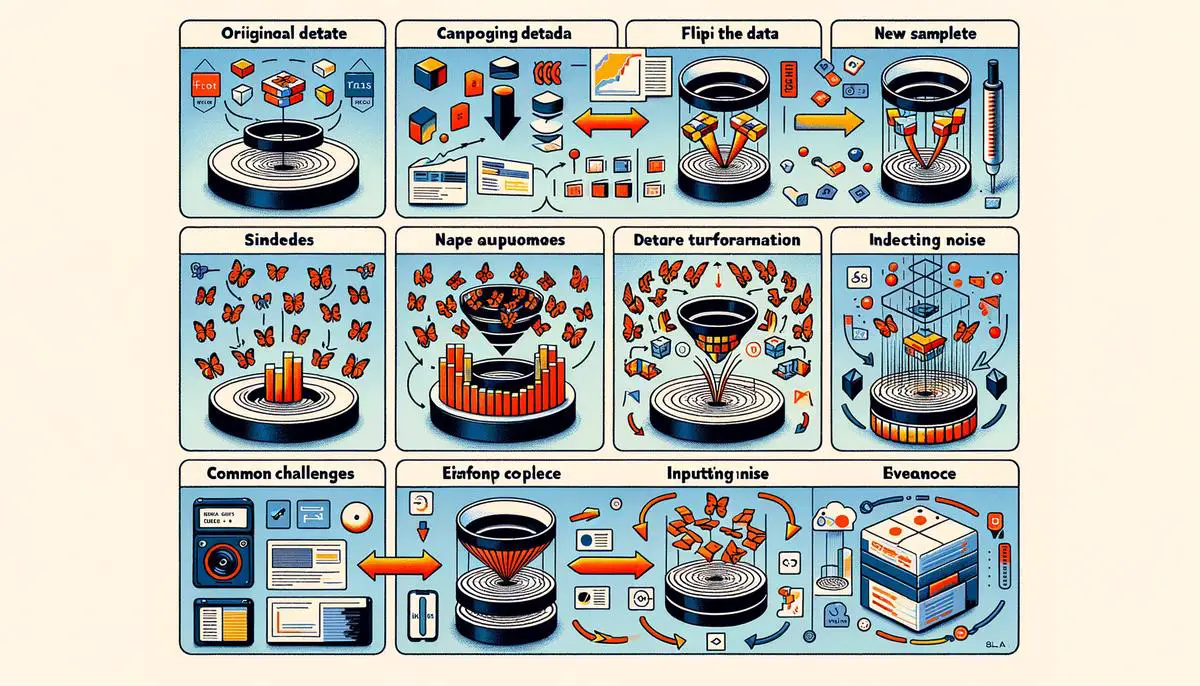

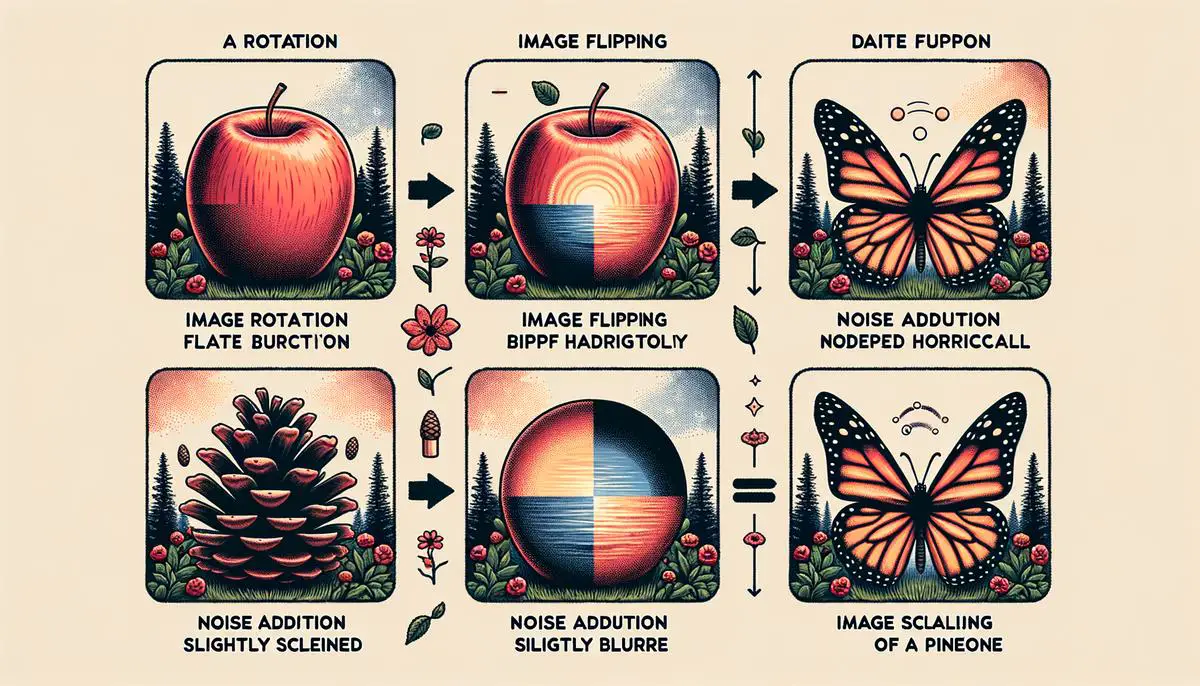

At its core, data augmentation is a strategy used to increase the diversity of data available for training models without actually collecting new data. This is done through various methods such as cropping, rotating, or flipping images in the case of visual data, or by adding noise to audio files. The idea is to transform the data in ways that maintain its original meaning but give the model more varied examples to learn from.
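The image transformations named above can be sketched in a few lines. This is a minimal illustration using NumPy, not the API of any particular augmentation library; the function names and the random stand-in image are assumptions made for the example.

```python
import numpy as np

def horizontal_flip(image):
    """Mirror an H x W x C image left to right."""
    return image[:, ::-1]

def rotate_90(image, k=1):
    """Rotate the image counter-clockwise by k * 90 degrees."""
    return np.rot90(image, k=k, axes=(0, 1))

def random_crop(image, crop_height, crop_width, rng):
    """Cut a random crop_height x crop_width window out of the image."""
    height, width = image.shape[:2]
    top = rng.integers(0, height - crop_height + 1)
    left = rng.integers(0, width - crop_width + 1)
    return image[top:top + crop_height, left:left + crop_width]

rng = np.random.default_rng(seed=0)
# Random pixels stand in for a real photo (assumption for the example).
image = rng.integers(0, 256, size=(32, 48, 3), dtype=np.uint8)

augmented = [
    horizontal_flip(image),
    rotate_90(image),
    random_crop(image, 24, 24, rng),
]
```

Each variant keeps the label of the original image, which is what lets one labeled example stand in for several.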

The need for data augmentation stems from deep learning models’ hunger for data. These models learn patterns and make predictions based on the data they’re fed during training, and in general, the more varied data they see, the better they perform. This is where data augmentation steps in, providing a cost-effective way to beef up datasets and improve model accuracy.

The importance of data augmentation in deep learning is hard to overstate. It directly addresses overfitting, which occurs when a model learns the training data too well, including its noise and outliers, and then performs poorly on unseen data. Introducing more variability into the training data pushes models to learn more general patterns that apply to a wider range of inputs.

Moreover, data augmentation is especially significant in fields where data is scarce or expensive to collect, such as medical imaging. By artificially expanding the dataset, deep learning models in these areas can achieve higher accuracy and reliability, ultimately contributing to advances in diagnosis and treatment methods.

Data augmentation also lets models learn from rare or unusual instances by synthesizing variations of the few examples available, rather than waiting for more such cases to occur naturally. This can be particularly useful in applications like fraud detection, where anomalous cases are critical for training but naturally infrequent.
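One simple way to give rare cases more weight is to duplicate them with a small amount of noise. The sketch below is an assumption-heavy illustration rather than a recommended fraud-detection recipe: the feature matrix is random, the function name is hypothetical, and jittering only makes sense for continuous features.

```python
import numpy as np

def oversample_with_jitter(features, labels, rare_label, copies, noise_scale, rng):
    """Append `copies` noisy duplicates of every rare-class row."""
    rare_rows = features[labels == rare_label]
    # Scale the noise by each column's spread so no feature is swamped.
    column_std = features.std(axis=0)
    synthetic = np.concatenate([
        rare_rows + rng.normal(0.0, noise_scale, rare_rows.shape) * column_std
        for _ in range(copies)
    ])
    new_features = np.concatenate([features, synthetic])
    new_labels = np.concatenate([labels, np.full(len(synthetic), rare_label)])
    return new_features, new_labels

rng = np.random.default_rng(seed=0)
features = rng.normal(size=(1000, 4))   # made-up transaction features
labels = np.zeros(1000, dtype=int)
labels[:10] = 1                         # 10 rare rows out of 1000

aug_features, aug_labels = oversample_with_jitter(
    features, labels, rare_label=1, copies=5, noise_scale=0.05, rng=rng
)
```

Augmentation of this kind belongs on the training split only, so that evaluation still reflects the real class balance.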

In summary, data augmentation is a powerful tool in the deep learning arsenal, providing a straightforward yet effective means of enhancing model performance, combatting overfitting, and ensuring models are well-equipped to handle real-world variability. Its role in the continued success and advancement of deep learning technologies is undeniable, making it a foundational practice in the development of intelligent, robust models.

Techniques and Their Applications

Now, let’s delve further into the practical applications of data augmentation across various domains, showcasing the versatility and indispensability of this technique in the realm of artificial intelligence (AI) and machine learning.

In the field of autonomous driving, data augmentation takes on a pivotal role. Training an AI model to navigate the myriad situations on the road requires a vast amount of data covering varying weather conditions, times of day, and unpredictable pedestrian behaviors. Augmentation methods such as altering lighting conditions in images or simulating raindrops and fog on camera lenses can help prepare autonomous driving systems for real-world challenges without the prohibitive costs and risks of collecting such diverse data in the field.
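Lighting and fog changes can be approximated with simple pixel arithmetic. The following is a rough sketch, assuming 8-bit RGB frames; the uniform gray haze is a deliberate simplification, and realistic weather simulation is considerably more involved.

```python
import numpy as np

def adjust_brightness(image, factor):
    """Scale pixel intensities; factor < 1 darkens, factor > 1 brightens."""
    scaled = image.astype(np.float32) * factor
    return np.clip(scaled, 0, 255).astype(np.uint8)

def add_fog(image, density):
    """Blend the image toward a uniform light-gray haze; density in [0, 1]."""
    fog_color = 200.0  # assumed haze intensity, chosen for illustration
    blended = image.astype(np.float32) * (1.0 - density) + fog_color * density
    return np.clip(blended, 0, 255).astype(np.uint8)

rng = np.random.default_rng(seed=0)
# Random pixels stand in for a camera frame (assumption for the example).
frame = rng.integers(0, 256, size=(64, 96, 3), dtype=np.uint8)

dusk = adjust_brightness(frame, 0.4)
foggy = add_fog(frame, 0.6)
```

Lower brightness factors imitate dusk or night, while higher fog densities wash out contrast the way haze does.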

Agriculture technology, another domain benefiting from data augmentation, leverages it for plant disease detection and crop health monitoring. Given the challenges in capturing the numerous disease manifestations or environmental stress conditions across different crop types, data augmentation enables the creation of extensive training datasets. Techniques like image manipulation to replicate symptoms of diseases or deficiencies help in training more robust models capable of supporting farmers in making informed decisions.

In retail and e-commerce, data augmentation assists in improving the shopping experience through better product recommendation systems and visual search capabilities. By artificially expanding the dataset of product images through rotations, zooming, or color variation, AI models can more accurately identify and suggest products that meet the diverse preferences of shoppers. This not only enhances customer satisfaction but also drives sales by making product discovery more intuitive and engaging.

Educational technology also harnesses data augmentation, particularly in personalized learning experiences. By augmenting datasets with synthetic data reflecting various learning styles and preferences, AI models can tailor educational content to fit individual student needs more precisely. This approach fosters a more inclusive and effective learning environment, accommodating the wide spectrum of learning speeds and styles present in any educational setting.

Lastly, the entertainment industry is transforming storytelling and gaming experiences through data augmentation. By generating novel content or modifying existing assets (e.g., backgrounds in animation or textures in video games), creative teams can produce more diverse and rich media experiences. This not only streamlines the production process but also enables the exploration of more complex and varied narrative landscapes, thereby captivating wider audiences.

In conclusion, data augmentation proves to be a cornerstone technique pivotal to the advancements and applications of AI across an impressive array of domains. By intelligently augmenting data, developers and researchers push the boundaries of what machine learning models can achieve, opening the door to innovations that were once deemed beyond reach. Through these practical applications, it’s evident that data augmentation is not just a tool for model improvement but a catalyst for technological progress and societal benefit.

Tools and Software for Data Augmentation

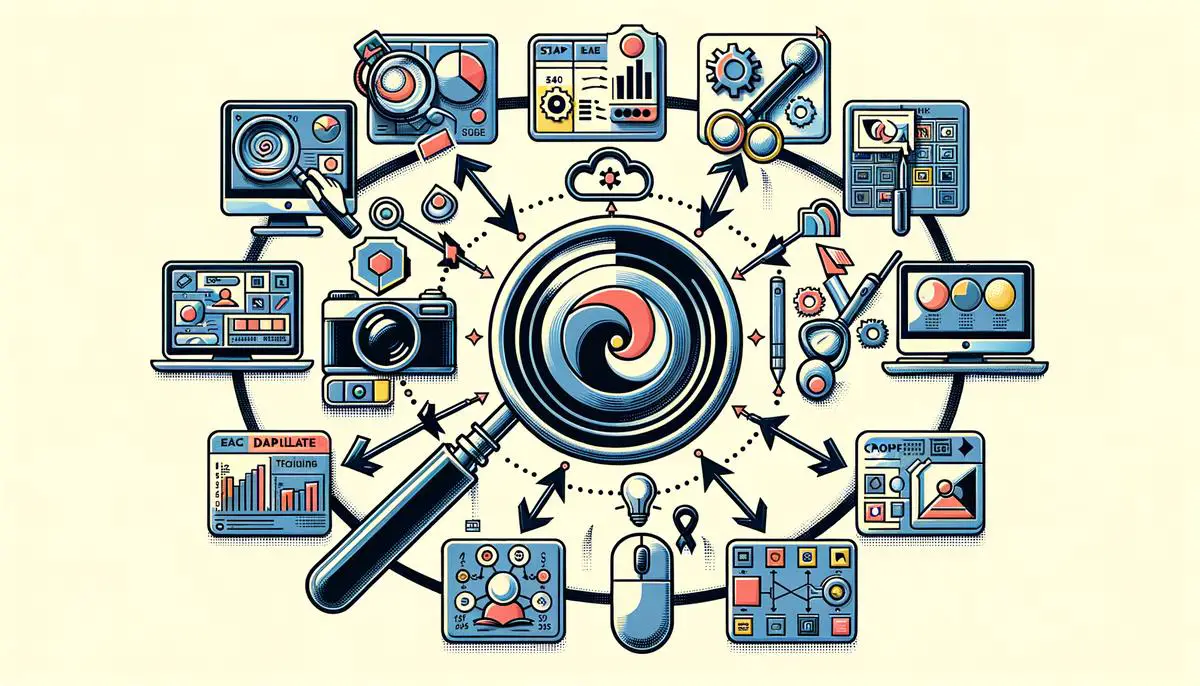

A wide range of tools and software helps developers and researchers implement data augmentation efficiently in deep learning projects. These utilities range from open-source libraries to comprehensive platforms, and they simplify the process of augmenting datasets, whether for image, text, audio, or video data.

One widely recognized tool in the realm of image data augmentation is Augmentor. Designed with simplicity in mind, Augmentor provides an intuitive Python library for automating image data augmentation. It enables the execution of basic transformations such as rotations, zooming, and flipping, alongside more intricate operations such as skewing and random erasing, all through easy-to-use functions.
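To make random erasing concrete, here is a library-free sketch written with NumPy. It does not use or reproduce Augmentor's own functions; the function name, the `max_fraction` parameter, and the noise fill are assumptions chosen for illustration.

```python
import numpy as np

def random_erase(image, max_fraction, rng):
    """Overwrite one random rectangle with noise; returns a modified copy."""
    height, width = image.shape[:2]
    # The rectangle's sides are at most max_fraction of the image sides.
    erase_h = rng.integers(1, max(2, int(height * max_fraction) + 1))
    erase_w = rng.integers(1, max(2, int(width * max_fraction) + 1))
    top = rng.integers(0, height - erase_h + 1)
    left = rng.integers(0, width - erase_w + 1)
    erased = image.copy()
    patch_shape = (erase_h, erase_w) + image.shape[2:]
    erased[top:top + erase_h, left:left + erase_w] = rng.integers(
        0, 256, size=patch_shape, dtype=np.uint8
    )
    return erased

rng = np.random.default_rng(seed=0)
image = np.zeros((40, 40, 3), dtype=np.uint8)  # blank stand-in image
erased = random_erase(image, max_fraction=0.5, rng=rng)
```

Hiding part of the image encourages a model to rely on more than one region of an object when classifying it.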

Similarly, Albumentations stands out for its speed and support for a vast array of augmentation techniques, not just limited to the conventional ones. This Python library is especially favored for tasks demanding high-performance computing, such as training deep neural networks for computer vision. Its ability to handle not just images but masks and bounding boxes makes it a go-to for projects in segmentation and object detection.
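The reason mask and bounding-box support matters is that a geometric transform must be applied to the annotations as well as the pixels. The sketch below shows the idea for a horizontal flip in plain NumPy; it is not Albumentations code, and the box format `[x_min, y_min, x_max, y_max]` with an exclusive maximum is an assumption of this example.

```python
import numpy as np

def flip_with_boxes(image, mask, boxes):
    """Horizontally flip an image, its mask, and its boxes together.

    boxes is an (N, 4) array of [x_min, y_min, x_max, y_max] in pixels.
    """
    width = image.shape[1]
    flipped_image = image[:, ::-1]
    flipped_mask = mask[:, ::-1]
    flipped_boxes = boxes.copy()
    # Mirroring swaps the roles of the left and right edges.
    flipped_boxes[:, 0] = width - boxes[:, 2]
    flipped_boxes[:, 2] = width - boxes[:, 0]
    return flipped_image, flipped_mask, flipped_boxes

image = np.zeros((10, 20, 3), dtype=np.uint8)
mask = np.zeros((10, 20), dtype=np.uint8)
mask[2:5, 3:8] = 1                  # object occupies rows 2..4, columns 3..7
boxes = np.array([[3, 2, 8, 5]])    # the same object as a bounding box

_, new_mask, new_boxes = flip_with_boxes(image, mask, boxes)
```

If only the image were flipped, the box would still point at the object's old position and the training label would be wrong.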

When it comes to text data augmentation, NLPAug is a common choice for NLP (Natural Language Processing) practitioners. This library augments textual content by leveraging models like BERT, RoBERTa, and GPT-2 for contextually relevant transformations. Users can simulate real-world variations in text data by applying operations that insert, substitute, delete, or swap words and sentences, substantially increasing the variety of the dataset.
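The swap, delete, and substitute operations can be illustrated with rule-based versions in standard-library Python. This sketch does not call NLPAug and does not attempt the model-based, context-aware substitutions described above; the function names and the two-entry synonym table are assumptions.

```python
import random

def random_swap(words, rng):
    """Swap two randomly chosen word positions."""
    if len(words) < 2:
        return list(words)
    i, j = rng.sample(range(len(words)), 2)
    swapped = list(words)
    swapped[i], swapped[j] = swapped[j], swapped[i]
    return swapped

def random_delete(words, drop_probability, rng):
    """Drop each word independently, but never return an empty sentence."""
    kept = [w for w in words if rng.random() >= drop_probability]
    return kept if kept else [rng.choice(words)]

def substitute(words, synonyms):
    """Replace words that have an entry in a synonym table."""
    return [synonyms.get(w, w) for w in words]

rng = random.Random(0)
sentence = "the quick brown fox jumps over the lazy dog".split()
synonyms = {"quick": "fast", "lazy": "idle"}  # toy table, not a real lexicon

variants = [
    " ".join(random_swap(sentence, rng)),
    " ".join(random_delete(sentence, 0.2, rng)),
    " ".join(substitute(sentence, synonyms)),
]
```

Rule-based edits like these are cheap but can change meaning, which is one motivation for the contextual models mentioned above.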

For those delving into audio data augmentation, Audiomentations offers a robust solution. This Python library equips users with a wide range of augmentation choices for sound data, including noise injection, time stretching, pitch shifting, and more. Designed to enhance the diversity of audio datasets, it ensures models are well-trained to recognize sound patterns under various conditions.
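Two of these audio operations can be sketched with NumPy alone. This is an illustration under simplifying assumptions, not Audiomentations code: the signal is a synthetic tone, and the speed change uses naive resampling, which alters pitch and duration together rather than performing a true time stretch or pitch shift.

```python
import numpy as np

def add_noise(signal, snr_db, rng):
    """Add white Gaussian noise at a target signal-to-noise ratio in dB."""
    signal_power = np.mean(signal ** 2)
    noise_power = signal_power / (10 ** (snr_db / 10))
    noise = rng.normal(0.0, np.sqrt(noise_power), size=signal.shape)
    return signal + noise

def change_speed(signal, rate):
    """Resample by linear interpolation; rate > 1 shortens the clip.

    This naive approach shifts pitch along with duration. Stretching time
    without changing pitch needs a phase vocoder or a similar method.
    """
    new_length = int(round(len(signal) / rate))
    old_positions = np.arange(len(signal))
    new_positions = np.linspace(0, len(signal) - 1, new_length)
    return np.interp(new_positions, old_positions, signal)

sample_rate = 16_000
t = np.arange(sample_rate) / sample_rate
tone = np.sin(2 * np.pi * 440.0 * t)   # one second of a 440 Hz tone

rng = np.random.default_rng(seed=0)
noisy = add_noise(tone, snr_db=20, rng=rng)
faster = change_speed(tone, rate=1.25)
```

Specifying noise by signal-to-noise ratio keeps the perturbation proportional to how loud each clip is.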

Crossing into the domain of video data augmentation, VidAug is another notable mention. It caters to the specific needs of augmenting video datasets by incorporating temporal dimension transformations alongside spatial manipulations. This includes adjustments like altering playback speed, applying geometric transformations frame by frame, and integrating visual effects to simulate different lighting and weather conditions.
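The temporal and spatial sides of video augmentation can be shown on a clip stored as a `T x H x W x C` array. The sketch below uses NumPy rather than VidAug itself, and the array layout and function names are assumptions of this example.

```python
import numpy as np

def change_playback_speed(frames, step):
    """Keep every `step`-th frame, which speeds playback up by that factor."""
    return frames[::step]

def flip_clip(frames):
    """Mirror every frame the same way so motion stays consistent."""
    return frames[:, :, ::-1]

rng = np.random.default_rng(seed=0)
# Random pixels stand in for a 30-frame clip (assumption for the example).
clip = rng.integers(0, 256, size=(30, 16, 24, 3), dtype=np.uint8)

fast = change_playback_speed(clip, step=2)
mirrored = flip_clip(clip)
```

Applying one spatial transform to the whole clip, rather than a different random one per frame, avoids introducing flicker that no real camera would produce.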

Diving deeper into specialized applications, DeepAugment and EvaNet offer tailored solutions for complex scenarios. DeepAugment utilizes deep learning itself to discover new augmentation strategies that can be especially beneficial for unique datasets. Concurrently, EvaNet acts as a neural network capable of learning and applying optimal augmentation strategies for any given task, democratizing data augmentation across varied deep learning challenges.

Implementing data augmentation is undeniably a critical step in curating robust deep learning models, and the availability of sophisticated tools and software simplifies this process for developers and researchers alike. From enhancing the volume and variety of datasets to ensuring models are exposed to a broader spectrum of data phenomena, these utilities play a pivotal role in the success of deep learning projects across multiple domains. Each tool, with its unique capabilities and focus areas, contributes to the overarching goal of achieving more accurate, reliable, and efficient models, fostering innovations that push the boundaries of what deep learning can achieve.

Challenges and Best Practices

Exploring the Challenges and Best Practices in Applying Data Augmentation

While the concept of data augmentation has been widely acknowledged for its role in improving the performance of deep learning models, its application is not without challenges. Delving into the intricacies of this process uncovers a set of obstacles that practitioners need to navigate. Moreover, adhering to best practices can significantly streamline this process, enhancing efficiency and outcomes in deep learning projects.

One of the primary challenges in applying data augmentation lies in determining the appropriate level of augmentation. Over-augmentation can lead to models that are too generalized, failing to capture the nuances of the data. This occurs when the augmented data diverges too far from real-world scenarios or no longer matches its label: rotating a handwritten 6 by 180 degrees, for example, produces a 9. Conversely, under-augmentation might not provide sufficient variability, leaving models prone to overfitting. Striking the right balance is crucial but often requires trial and error, alongside a deep understanding of the problem domain.

Another obstacle is the computational cost associated with data augmentation. Generating and processing augmented data demands additional computational resources, which can extend training times and increase expenses, particularly for large datasets or complex augmentation strategies. This challenge necessitates careful planning and optimization to ensure that the benefits of data augmentation justify the additional costs.

The choice of augmentation techniques poses yet another challenge. With a vast array of methods available, from simple transformations like cropping and flipping to more sophisticated techniques involving GANs (Generative Adversarial Networks), selecting the most effective strategy can be daunting. The suitability of these methods can vary significantly across different projects and data types, requiring practitioners to possess a solid understanding of both the techniques and their data.

To mitigate these challenges, a set of best practices has emerged within the deep learning community. First and foremost, it’s recommended to start with simple augmentation techniques and gradually introduce complexity based on the model’s response. This iterative approach allows for the assessment of each technique’s impact on model performance, enabling more informed decisions.

Another best practice is to leverage domain knowledge. Understanding the context within which the model operates can guide the selection of augmentation strategies. For instance, in medical imaging, knowing which variations are clinically relevant can inform more effective augmentation choices.

Employing automated tools and libraries, such as Augmentor and Albumentations, can also alleviate some of the computational burdens. These tools not only streamline the augmentation process but also introduce efficiency through optimized algorithms, reducing the time and resources required.

Furthermore, continuous monitoring and evaluation of the model’s performance with the augmented data are crucial. This involves not just assessing accuracy but also looking for signs of overfitting or underfitting, enabling timely adjustments to the augmentation strategy.
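A monitoring check of this kind can be as simple as comparing training and validation accuracy after each run. The sketch below is a rule-of-thumb illustration: the function name, the thresholds (a 0.10 gap and a 0.70 floor), and the sample readings are all assumptions that would need tuning for a real project.

```python
def diagnose_fit(train_accuracy, val_accuracy, gap_threshold=0.10, floor=0.70):
    """Classify one train/validation reading with rule-of-thumb thresholds."""
    if train_accuracy - val_accuracy > gap_threshold:
        return "overfitting: consider stronger augmentation"
    if train_accuracy < floor and val_accuracy < floor:
        return "underfitting: consider weaker augmentation"
    return "ok"

# Made-up (train, validation) accuracy pairs from three hypothetical runs.
history = [
    (0.99, 0.80),
    (0.62, 0.60),
    (0.91, 0.88),
]
reports = [diagnose_fit(train, val) for train, val in history]
```

For the comparison to be meaningful, the validation set should be left unaugmented so that it reflects the data the model will actually encounter.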

In conclusion, while data augmentation presents a powerful method to enhance deep learning models, navigating its challenges demands a thoughtful approach. By adhering to best practices, such as starting simple, leveraging domain knowledge, using automation tools, and continuously monitoring model performance, practitioners can effectively harness the benefits of data augmentation. This not only improves model accuracy and reliability but also contributes significantly to the advancement of deep learning technologies.

Future Directions in Data Augmentation

Looking into the future of data augmentation in deep learning, the momentum it has gathered across various sectors suggests a roadmap teeming with innovation and expansion. As technology evolves, so do the strategies employed to enhance deep learning models. The next phase of data augmentation is anticipated to unfold through several key trends that promise to redefine how data enhances machine learning.

AI-Driven Data Augmentation Strategies: Future developments are poised to integrate artificial intelligence more deeply into the process of data augmentation. AI could automate the selection and application of augmentation techniques, tailoring them to the specific needs of the dataset and the learning task. This level of automation would streamline the preparation of data, making deep learning models even more effective and efficient.

Cross-Domain Augmentation: The potential for cross-domain data augmentation is vast. Techniques that have proven successful in one area, such as image processing, might be adapted for use in unrelated domains, like text or audio. This cross-pollination of ideas could lead to the development of novel augmentation strategies that boost model performance in unexpected ways.

Increased Focus on Synthetic Data: The generation of synthetic data is a promising avenue for data augmentation. By creating entirely new data points from existing datasets, deep learning models can be trained on a wider variety of situations than those present in the original data. This approach is particularly invaluable in fields where data is rare or expensive to collect. Advances in generative models, such as Generative Adversarial Networks (GANs), will play a significant role in the creation of high-quality, diverse synthetic datasets.

Ethical and Bias Considerations: As data augmentation becomes more sophisticated, ethical considerations and the potential for bias in augmented datasets will come to the forefront. The deep learning community is expected to advocate for responsible data augmentation practices that promote fairness and mitigate bias. Tools and methodologies that audit and adjust augmented data to ensure diversity and representation will become essential components of the data augmentation toolkit.

Integration with Active Learning: Data augmentation is likely to converge with active learning, a paradigm where models identify and learn from the most informative data points. By combining these approaches, deep learning systems could dynamically augment data in areas where the model’s performance is weak, leading to more rapid and targeted improvements.

Augmented Reality and Virtual Environments: The use of augmented reality (AR) and virtual environments for data augmentation offers exciting possibilities. These technologies can generate complex, realistic scenarios for training deep learning models, especially in robotics and autonomous vehicle navigation. By simulating real-world conditions, AR and virtual environments can provide an endless supply of diversified training data.

As we peer into the horizon of data augmentation in deep learning, it’s clear that the field is set for significant transformations. These forthcoming trends not only promise to enhance the capabilities of deep learning models but also to address some of the most pressing challenges in AI development. By embracing innovation and maintaining a vigilant approach to the ethical dimensions of data augmentation, the future holds the promise of more robust, accurate, and fair deep learning systems.

The landscape of data augmentation in deep learning is a testament to the unyielding pursuit of excellence in the field of artificial intelligence. By continuously pushing the boundaries through innovative techniques and applications, data augmentation not only enhances model performance but also paves the way for groundbreaking advancements. As we look towards a future where AI integrates seamlessly into every aspect of our lives, the strategic augmentation of data will remain a cornerstone in developing models that are not just accurate but are capable of understanding and interacting with the world in ways previously imagined only in the realm of science fiction. The promise of data augmentation lies not just in its current applications but in its potential to unlock new dimensions of machine intelligence, making the dream of truly intelligent systems a closer reality.

Emad Morpheus is a tech enthusiast with a unique flair for AI and art. Backed by a Computer Science background, he dove into the captivating world of AI-driven image generation five years ago. Since then, he has been honing his skills and sharing his insights on AI art creation through his blog posts. Outside his tech-art sphere, Emad enjoys photography, hiking, and piano.