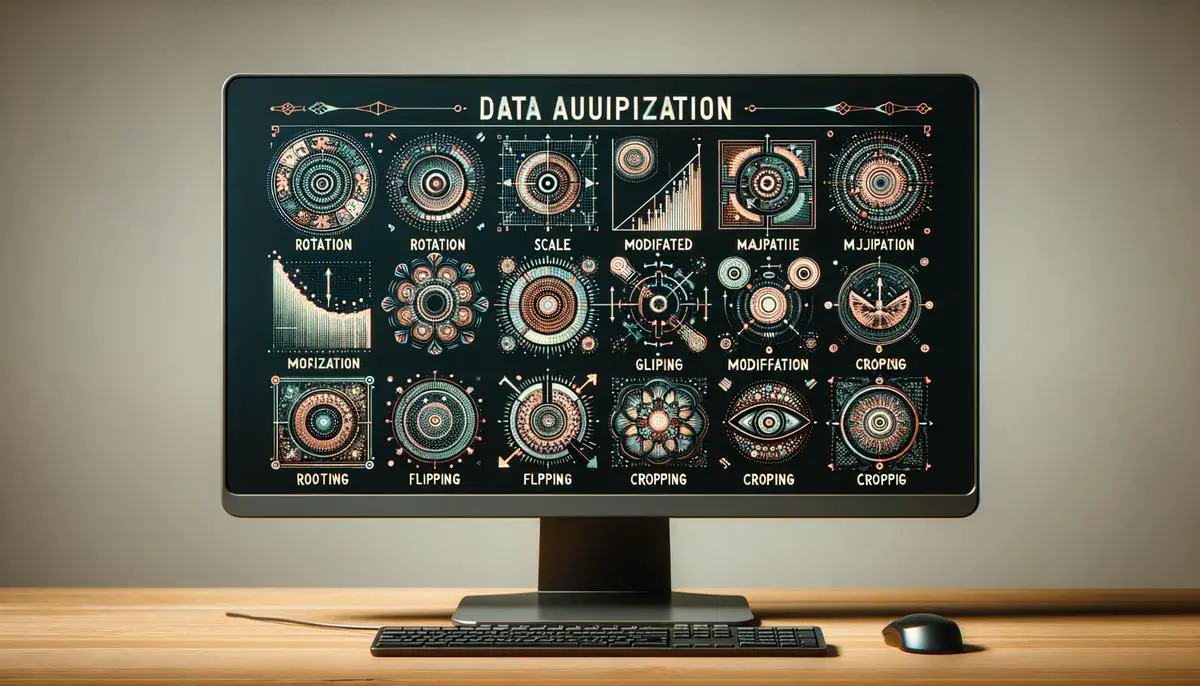

Data augmentation has become a key technique for improving the accuracy and performance of machine learning models. Models generally need large and varied datasets, yet collecting such datasets is a common obstacle. Data augmentation lets practitioners expand a dataset without gathering new data by generating modified versions of the examples they already have. This article examines how the technique works and how it shapes the development and success of machine learning models.


Understanding Data Augmentation


Introduction: Data augmentation plays an important role in improving model accuracy and performance. Machine learning models typically need a large amount of data to learn patterns and make accurate predictions, but acquiring such datasets can be difficult and expensive. Data augmentation helps developers and data scientists get more out of the data they already have.

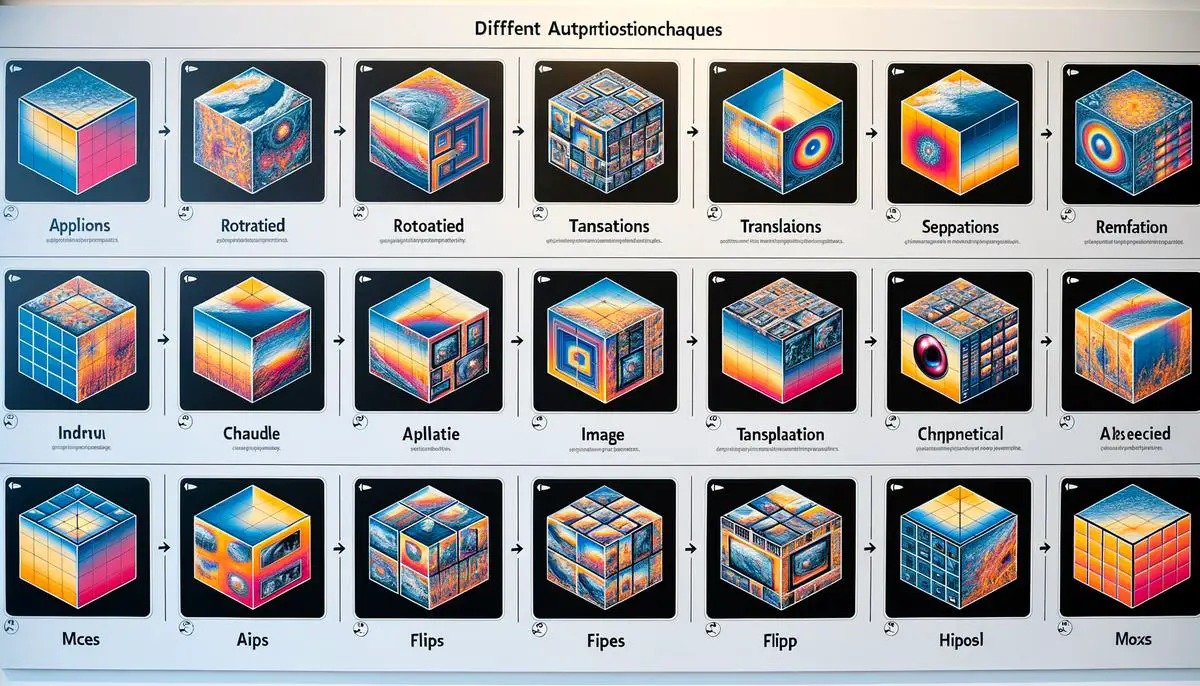

What is Data Augmentation? Data augmentation is a strategy for increasing the diversity of training data without collecting new data. It applies small, label-preserving transformations to existing examples to produce additional training samples. In image recognition, for instance, this might mean rotating, flipping, or altering the colors of images; a flipped photo of a cat is still a photo of a cat. The goal is to teach the model to recognize the same object under varied conditions, improving its ability to generalize from the training data to new, unseen data.
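The following minimal NumPy sketch makes the idea concrete by producing flipped, rotated, and brightened variants of a single image. It is illustrative rather than tied to any library: `simple_variants` and its `brightness` factor are hypothetical names, and the image is assumed to be an `(H, W, C)` array of `uint8` pixel values.

```python
import numpy as np

def simple_variants(img, brightness=1.2):
    """Return a few label-preserving variants of an (H, W, C) uint8 image."""
    flipped = img[:, ::-1, :]                  # horizontal flip (mirror left-right)
    rotated = np.rot90(img, k=1, axes=(0, 1))  # rotate 90 degrees counter-clockwise
    # Brighten in float, then clip to the valid 0-255 range before casting back.
    brighter = np.clip(img.astype(np.float32) * brightness, 0, 255).astype(np.uint8)
    return [flipped, rotated, brighter]

# A tiny 2x3 RGB "image" for demonstration.
image = np.arange(18, dtype=np.uint8).reshape(2, 3, 3)
variants = simple_variants(image)
print([v.shape for v in variants])  # [(2, 3, 3), (3, 2, 3), (2, 3, 3)]
```

A 90-degree rotation swaps height and width for non-square images, and whether a transformation preserves the label depends on the task: a horizontal flip suits most natural photos but not, for example, images of text.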

Why is Data Augmentation Crucial for Machine Learning Models?

- Enhances Model Robustness and Accuracy: Exposing the model to more variations of the data helps it become more robust and accurate. It reduces the risk that the model overfits to incidental characteristics of a limited training set and helps it handle new data well.

- Addresses Data Scarcity: In many cases, obtaining a large and diverse dataset is not feasible due to time, privacy, or resource constraints. Data augmentation artificially inflates the dataset with modified versions of the available data, helping overcome the challenge of data scarcity.

- Improves Generalization: Machine learning models should perform well on new, unseen data. Data augmentation introduces a variety of scenarios through artificial modifications, which helps the model to generalize better when it encounters real-world data that differs from the training set.

- Cost-Effective: Collecting and labeling new data can be a costly affair. Data augmentation provides a cost-effective solution by making the most out of the existing data. It allows for the creation of a comprehensive dataset for training without the need for significant additional investment.

Conclusion: Data augmentation is a standard technique in the machine learning practitioner's toolkit. By artificially expanding the dataset, it can improve the performance, accuracy, and generalization of machine learning models. Whether you are working with images, text, or other types of data, well-chosen augmentations can lead to noticeable improvements in model outcomes.

Implementing Data Augmentation with TensorFlow and Keras


Data augmentation is especially useful in image recognition tasks. It increases the diversity of your training set by applying random transformations to your original images, which makes your model more robust and less prone to overfitting. The following steps show how to implement data augmentation in Python using TensorFlow and Keras, two of the most widely used deep learning libraries.

Step 1: Set Up Your Python Environment

First, make sure Python is installed on your system. Then install TensorFlow, which includes Keras as its high-level API, together with Matplotlib for the visualization step, by running `pip install tensorflow matplotlib` in your terminal or command prompt. Installing Keras separately is not necessary and can lead to version mismatches.

Step 2: Import Required Libraries

Once installation is complete, import the required libraries in your Python script: TensorFlow, the `ImageDataGenerator` class from Keras, NumPy for array handling, and Matplotlib for displaying images:

```python
import tensorflow as tf
from tensorflow.keras.preprocessing.image import ImageDataGenerator
import numpy as np
import matplotlib.pyplot as plt
```

Step 3: Load Your Dataset

For this guide, we assume you have a dataset of images. Load it into your Python environment; if it is already divided into training and test sets, make sure both are accessible for the augmentation process:

```python
# Example code to load a dataset
(train_images, train_labels), (test_images, test_labels) = tf.keras.datasets.cifar10.load_data()
```

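This code uses the CIFAR-10 dataset as an example: `load_data()` returns 50,000 training and 10,000 test images, each a 32×32 RGB image stored as `uint8` values and labeled with one of 10 classes. Replace it with code appropriate for loading your own dataset.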

Step 4: Initialize the ImageDataGenerator

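Keras provides the `ImageDataGenerator` class for real-time image data augmentation: you specify the transformations you want, and it applies them randomly as images are drawn from it. Note that recent TensorFlow releases mark `ImageDataGenerator` as deprecated and recommend Keras preprocessing layers such as `tf.keras.layers.RandomFlip` and `tf.keras.layers.RandomRotation` for new code, although the class still illustrates the core ideas well. Initialize an `ImageDataGenerator` with the augmentation transformations you want: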

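```python
data_augmentation = ImageDataGenerator(
    rotation_range=20,       # rotate by a random angle in [-20, 20] degrees
    width_shift_range=0.2,   # shift horizontally by up to 20% of the width
    height_shift_range=0.2,  # shift vertically by up to 20% of the height
    shear_range=0.2,         # shear angle in degrees (counter-clockwise)
    zoom_range=0.2,          # zoom by a random factor in [0.8, 1.2]
    horizontal_flip=True,    # randomly mirror images left-right
    fill_mode='nearest'      # fill newly exposed pixels with the nearest edge pixel
)
```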

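The parameters `rotation_range`, `width_shift_range`, and the others set the maximum strength of each transformation; for every image, the generator draws a random value within that range, so the same image looks slightly different each time it is used. Adjust these values to your data. For example, `shear_range` is measured in degrees, so a value of 0.2 produces only a very slight shear, and `horizontal_flip` is a poor choice when left-right orientation carries meaning, as with text.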
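To build intuition for what a range parameter means, the NumPy sketch below imitates `width_shift_range` combined with `fill_mode='nearest'`: for each image it draws a random shift within ±20% of the width and fills the exposed columns by repeating the nearest edge column. This is a simplified, hypothetical re-implementation for illustration only (`random_width_shift` is not part of Keras), and Keras's internal code differs in detail.

```python
import numpy as np

def random_width_shift(img, width_shift_range=0.2, rng=None):
    """Shift an (H, W, C) image horizontally by a random fraction of its width.

    The fraction is drawn uniformly from [-width_shift_range, width_shift_range]
    and multiplied by the width; columns exposed by the shift repeat the
    nearest edge column, similar in spirit to fill_mode='nearest'.
    """
    rng = np.random.default_rng() if rng is None else rng
    w = img.shape[1]
    shift = int(round(rng.uniform(-width_shift_range, width_shift_range) * w))
    # Output column j reads input column j - shift; clamping the index to
    # [0, w - 1] makes out-of-range columns copy the nearest edge column.
    cols = np.clip(np.arange(w) - shift, 0, w - 1)
    return img[:, cols, :], shift

# One row of 10 pixels whose values equal their column index.
image = np.arange(10, dtype=np.uint8).reshape(1, 10, 1)
shifted, shift = random_width_shift(image, 0.2, np.random.default_rng(0))
print(shift, shifted[0, :, 0])
```

Each call draws a new shift, which is why a generator that revisits the same source image produces a different variant every epoch.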

Step 5: Apply Data Augmentation

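With the `ImageDataGenerator` configured, apply it to your dataset. For images already loaded into memory as a NumPy array, use the `.flow()` method, which returns an iterator that yields batches of randomly augmented images (for images stored in folders on disk, `.flow_from_directory()` serves the same purpose). For demonstration, this guide augments the training set: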

```python
# Assume train_images is your dataset to augment
augmented_images = data_augmentation.flow(train_images, batch_size=32)
```
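`.flow()` does not build a fixed, pre-augmented copy of the dataset. It returns an iterator that applies fresh random transformations each time a batch is requested and keeps cycling through the data. The sketch below imitates that behavior in plain Python and NumPy under simplifying assumptions; `augmenting_batches` and `random_flip` are hypothetical names, and the real Keras iterator offers more options (such as `seed` and `save_to_dir`).

```python
import numpy as np

def augmenting_batches(images, batch_size, augment_fn, shuffle=True, seed=None):
    """Yield batches of freshly augmented images forever, one epoch after another."""
    rng = np.random.default_rng(seed)
    n = len(images)
    while True:  # loop over epochs indefinitely, like a Keras iterator
        order = rng.permutation(n) if shuffle else np.arange(n)
        for start in range(0, n, batch_size):
            idx = order[start:start + batch_size]
            # Augment each image on the fly; the source array is never modified.
            yield np.stack([augment_fn(images[i], rng) for i in idx])

def random_flip(img, rng):
    """Flip an (H, W, C) image left-right with probability 0.5."""
    return img[:, ::-1, :] if rng.random() < 0.5 else img

images = np.random.default_rng(1).integers(0, 256, size=(5, 4, 4, 3)).astype(np.uint8)
batches = augmenting_batches(images, batch_size=2, augment_fn=random_flip, seed=0)
first = next(batches)
print(first.shape)  # (2, 4, 4, 3)
```

With 5 images and a batch size of 2, each pass yields batches of 2, 2, and 1 images before starting over with a new shuffle.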

Step 6: Visualize Augmented Images

To ensure that your augmentations are applied as expected, visualize some of the augmented images:

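```python
# Fetch one batch of augmented images
augmented_image_batch = next(augmented_images)

# Plot the first image from the batch
plt.figure(figsize=(10, 10))
plt.imshow(np.uint8(augmented_image_batch[0]))  # batches are float; cast to uint8 for display
plt.axis('off')
plt.show()
```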

This snippet fetches a batch of augmented images and displays the first image from that batch.

By following these steps, you have set up data augmentation for your dataset using TensorFlow and Keras in Python. To benefit from it, train your model on the augmented batches, for example by passing the iterator returned by `data_augmentation.flow(train_images, train_labels, batch_size=32)` to `model.fit()`. This can noticeably improve performance in tasks that benefit from a diverse and robust training set, such as image recognition.

Custom Data Augmentation Techniques

Having covered what data augmentation is and how to apply standard transformations with Keras, we now turn to custom data augmentation techniques. These let you tailor transformations to your dataset beyond the standard set, giving you finer control over how your model is trained.


Custom data augmentation involves creating your own unique augmentations that are not readily available in pre-existing libraries. This can be particularly useful when dealing with specialized datasets where conventional augmentation techniques may not suffice. For instance, in medical imaging, you might need very specific transformations that closely mimic real-world variations in the data.

How to Implement Custom Data Augmentation Techniques

- Identify the Need for Customization: Before diving into custom augmentation, examine your dataset closely. Understand the specificities of your data and the challenges it presents. Ask yourself whether existing augmentation techniques address these challenges adequately. If not, custom augmentation might be the path to follow.

- Write Custom Augmentation Functions: Write functions that perform the specific augmentations your data needs. For example, you could write a function that simulates different lighting conditions or artificially creates occlusions. Each function takes an image as input, applies the transformation, and returns the altered image.

- Integrate Custom Functions with Your Data Pipeline: Once your custom augmentations are ready, integrate them into your data preprocessing pipeline. If you’re using a library like TensorFlow or PyTorch, you can apply your functions during dataset preparation (for example, with `tf.data.Dataset.map` in TensorFlow or `torchvision.transforms.Lambda` in PyTorch), so that each image passes through your custom augmentations.

- Test and Refine: After integrating your custom augmentations, thoroughly test your model’s performance. It’s crucial to monitor not just the accuracy but also how the model behaves with unseen data. Sometimes, overly aggressive augmentation can make the model learn unrealistic features. If necessary, refine your augmentation techniques to strike the right balance.

- Iterate and Expand: Data augmentation is an iterative process. As your model evolves and you collect more data, revisit your augmentation strategies. New challenges might emerge that require additional custom augmentations. Stay flexible and ready to expand your augmentation toolkit.

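```python
import numpy as np

def adjust_lighting(img, intensity=0.5):
    # Scale pixel brightness by `intensity` (<1 darkens, >1 brightens),
    # then clip back to the valid 0-255 pixel range.
    return np.clip(img.astype(np.float32) * intensity, 0, 255)
```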
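The occlusion idea from the steps above can be sketched the same way. The NumPy example below implements a random-erasing (cutout-style) occlusion, adds a randomized lighting step, and chains both into a single per-image pipeline. The function names, the 8-pixel patch size, and the assumption of `(H, W, C)` images with 0-255 values are illustrative choices, not a prescribed API. In Keras, a single-argument function such as `pipeline` could be passed to `ImageDataGenerator` through its `preprocessing_function` argument, which expects a function that takes one rank-3 image and returns an array of the same shape.

```python
import numpy as np

def random_occlusion(img, rng, size=8, fill=0):
    """Blank out a random size x size square (cutout-style occlusion)."""
    h, w = img.shape[:2]
    size = min(size, h, w)
    top = rng.integers(0, h - size + 1)
    left = rng.integers(0, w - size + 1)
    out = img.copy()  # leave the original image untouched
    out[top:top + size, left:left + size, :] = fill
    return out

def random_lighting(img, rng, low=0.6, high=1.4):
    """Scale brightness by a random factor in [low, high) and clip to 0-255."""
    factor = rng.uniform(low, high)
    return np.clip(img.astype(np.float32) * factor, 0, 255)

def make_pipeline(*steps, seed=None):
    """Chain augmentation steps into one function that takes a single image."""
    rng = np.random.default_rng(seed)

    def pipeline(img):
        for step in steps:
            img = step(img, rng)
        return img

    return pipeline

pipeline = make_pipeline(random_lighting, random_occlusion, seed=42)
image = np.full((32, 32, 3), 128, dtype=np.uint8)
augmented = pipeline(image)
print(augmented.shape)  # (32, 32, 3)
```

Keeping each step as a small function with the same signature makes it easy to reorder steps, drop one that proves too aggressive, or add new ones as the dataset evolves.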

Examples of Custom Augmentation Operations

To inspire your custom data augmentation journey, here are a few ideas that could be particularly useful in diverse scenarios:

- Geometric Distortions: Create functions to simulate more complex geometric transformations than the standard flips and rotations. For instance, non-linear distortions could simulate the effect of viewing objects through water.

- Synthetic Data Generation: For datasets with rare occurrences, consider generating synthetic data. Generative models such as Generative Adversarial Networks (GANs) can produce realistic images that supplement underrepresented cases in your dataset.

- Environmental Simulations: Write functions that simulate environmental effects such as fog, rain, or dust. For outdoor vision systems, these can be particularly useful in creating a robust model.
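As a rough illustration of the geometric and environmental ideas listed above, the NumPy sketch below approximates fog by blending every pixel toward a light gray, and approximates a water-like ripple by shifting each row horizontally by a sinusoidal offset. Both are deliberately simple, hypothetical stand-ins (`add_fog`, `ripple`, and their parameters are illustrative), not physically accurate models.

```python
import numpy as np

def add_fog(img, density=0.4, fog_value=200):
    """Blend an (H, W, C) image toward uniform gray: (1 - d) * img + d * fog."""
    img = img.astype(np.float32)
    return (1.0 - density) * img + density * fog_value

def ripple(img, amplitude=2.0, wavelength=16.0):
    """Shift each row left/right by a sine of its row index (nearest-edge fill)."""
    h, w = img.shape[:2]
    out = np.empty_like(img)
    cols = np.arange(w)
    for y in range(h):
        offset = int(round(amplitude * np.sin(2 * np.pi * y / wavelength)))
        # Row y reads columns shifted by `offset`, clamped at the image edges.
        out[y] = img[y, np.clip(cols - offset, 0, w - 1)]
    return out

image = np.zeros((16, 16, 3), dtype=np.uint8)
foggy = add_fog(image)  # every pixel becomes 80.0 (0.6 * 0 + 0.4 * 200)
wavy = ripple(image)
```

Outdoor datasets often benefit from combining such effects at random strengths, but it is worth checking visually that the results still resemble conditions the model will actually face.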

In conclusion, custom data augmentation is a powerful way to improve model performance, especially on unique or challenging datasets. Carefully designed and integrated custom augmentations help models stay accurate not only on the training data but also under the variations found in real-world scenarios. As you adopt them, keep a balance: augmentations should improve generalization without distorting the data so much that transformed examples no longer match their labels.

As this exploration of data augmentation and custom techniques shows, augmentation is more than an optional enhancement; it is a core tool in modern machine learning. Artificially expanding and customizing your datasets lets models train on more diverse data and cope better with the variability of real-world applications. By combining standard and custom augmentation techniques, developers and data scientists can build more robust, accurate, and generalizable models while working around the limits of the data they have.

Emad Morpheus is a tech enthusiast with a unique flair for AI and art. Backed by a Computer Science background, he dove into the captivating world of AI-driven image generation five years ago. Since then, he has been honing his skills and sharing his insights on AI art creation through his blog posts. Outside his tech-art sphere, Emad enjoys photography, hiking, and piano.