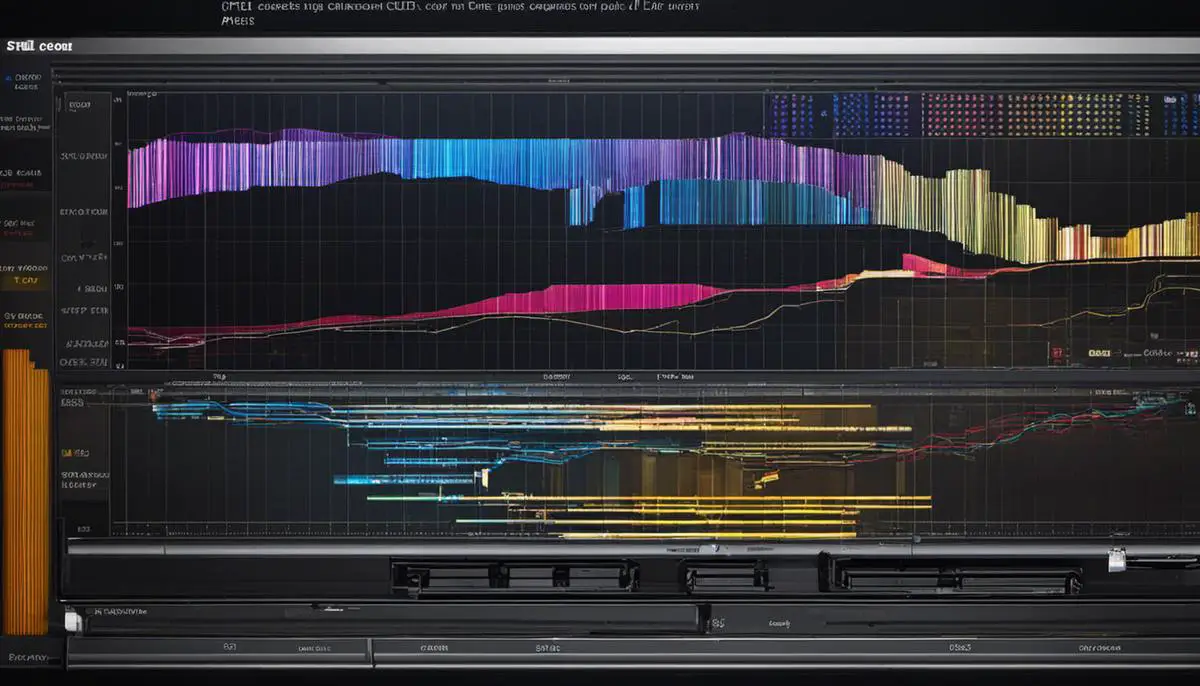

Unraveling the architectural complexities of Graphics Processing Units (GPUs) and Central Processing Units (CPUs) provides tremendous insight into the inner workings of contemporary computing. In this context, the role of these intricate pieces of hardware in driving forward the burgeoning field of artificial intelligence (AI) image generation is particularly pertinent.

As AI starts to permeate every sphere of contemporary life and work, performance optimization becomes crucial in the design and execution of AI tasks. Delving into the intrinsic capabilities of CPUs and GPUs and their application in AI image generation, this discourse seeks to uncover the strengths and shortcomings of each, whilst exploring the optimal balance between their synergistic collaboration.

Understand the basics: What are GPUs and CPUs?

GPUs vs. CPUs: Delineating Their Unique Features and Roles in Computing Tasks

In the dynamic world of computing, understanding the specific functions of hardware components is instrumental in optimizing performance and outcomes. Two essential elements in this arena are the Graphics Processing Unit (GPU) and the Central Processing Unit (CPU). This article demystifies these components, exploring their differences and how their distinctive features contribute to various computing tasks.

CPUs and GPUs: A Brief Outline

The heart of a computer is undoubtedly the CPU. It is the primary component responsible for executing most of the instructions issued by a computer’s software. A CPU contains a small number of powerful cores: consumer chips have commonly been dual-core (two processing cores on one chip) or quad-core (four), and many current models have more. These cores are proficient at switching quickly among tasks, which enables multitasking, and at executing sequential series of instructions very efficiently.

On the other hand, a GPU is a specialized electronic circuit designed to swiftly manipulate and alter memory to accelerate the creation of images in a frame buffer intended for output to a display device. GPUs are phenomenal at running hundreds or thousands of identical calculations simultaneously, an ability that makes them well suited to the complex mathematical calculations commonly associated with 3D graphics and machine learning.

Decoding Their Distinct Features

CPUs, with their few cores optimized for sequential processing, are akin to having a few highly skilled warriors in a battle. In contrast, GPUs, with their hundreds or thousands of simpler cores designed for parallel processing of data, are akin to having a gigantic army of less specialized warriors. Essentially, CPUs are optimized for tasks requiring high single-thread performance, while GPUs are optimized for workloads requiring massive parallelism.
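The contrast between one worker walking through the data and many workers applying the same operation to separate slices can be sketched in plain Python. This is only an illustration of the structure: the function names (`brighten`, `brighten_data_parallel`) and the pixel values are made up for the example, and Python threads stand in for GPU cores without delivering a real speedup.

```python
from concurrent.futures import ThreadPoolExecutor


def brighten(pixel: int, amount: int = 40) -> int:
    """Brighten one 8-bit pixel value, clamping the result at 255."""
    return min(pixel + amount, 255)


def brighten_sequential(pixels):
    # CPU-style: a single worker processes the pixels one after another.
    return [brighten(p) for p in pixels]


def brighten_data_parallel(pixels, workers=4):
    # GPU-style idea: split the data into chunks and apply the SAME
    # operation to every chunk independently of the others.
    chunk = max(1, (len(pixels) + workers - 1) // workers)
    chunks = [pixels[i:i + chunk] for i in range(0, len(pixels), chunk)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        parts = pool.map(brighten_sequential, chunks)  # keeps chunk order
        return [p for part in parts for p in part]


pixels = [0, 100, 200, 250, 30, 220, 255, 10]  # hypothetical sample data
assert brighten_sequential(pixels) == brighten_data_parallel(pixels)
```

Both versions produce the same result because no pixel depends on any other. That independence is what allows the work to be spread across many cores.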

Zooming in on Different Computing Tasks

In a computing scenario, CPUs and GPUs perform different tasks due to their architectural differences. Take gaming, for instance. While CPUs handle the game’s logic, such as AI and controlling NPCs (non-player characters), GPUs manage graphics rendering, ensuring seamless visualization and gameplay.

CPUs are ideal for largely single-threaded tasks that require high speeds and quick responsiveness, such as operating systems and most application software. In computationally intensive scientific applications, however, the parallel architecture of a GPU often gives it a significant advantage. For workloads that apply the same operation to many data elements at once, like those in video encoding and decoding, image and signal processing, or neural network algorithms, GPUs hold the edge.

In the realm of artificial intelligence (AI) and machine learning (ML), where complex matrix operations are required, GPUs have demonstrated unprecedented speed, thanks to their parallel computing capabilities. The architectural advantages of GPUs allow for quicker, more efficient data processing, crucial in AI and ML applications where time is literally of the essence.
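To see why matrix operations parallelize so well, consider a plain matrix multiplication. The sketch below uses pure Python rather than a GPU library, with illustrative helper names (`matmul`, `multiply_add_count`). The point is that every output element is an independent dot product.

```python
def matmul(a, b):
    """Multiply an m x k matrix by a k x n matrix (both lists of rows)."""
    m, k, n = len(a), len(b), len(b[0])
    assert all(len(row) == k for row in a), "inner dimensions must match"
    # Each output element c[i][j] is its own dot product. None depends on
    # another, so in principle all m * n of them could run at the same time.
    return [
        [sum(a[i][t] * b[t][j] for t in range(k)) for j in range(n)]
        for i in range(m)
    ]


def multiply_add_count(m, k, n):
    """Number of multiply-add steps in an (m x k) by (k x n) product."""
    return m * n * k


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
print(matmul(a, b))                          # [[19, 22], [43, 50]]
print(multiply_add_count(1024, 1024, 1024))  # 1073741824
```

A single 1024 by 1024 product already involves over a billion multiply-add steps, and neural networks chain many such products. That is the kind of workload a massively parallel processor is built for.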

In conclusion, when navigating the arenas of computational tasks, understanding the distinct features of CPUs and GPUs and their specific roles helps to optimize technology choices and outcomes. These two vital components, with their unique capabilities, coordinate in synergy to produce the digital world that end-users explore every day. A proficient techie knows how to leverage these differences effectively, maximizing system performance and ultimately harnessing the true power of computing.

How GPU contributes to AI image generation

Moving on from that concrete base of understanding, it’s pertinent to delve deeper into the pivotal role GPUs play in AI image generation and how they potentially optimize this process.

Artificial Intelligence (AI) has rapidly integrated itself into virtually every realm due to its vast and varied applications. One profound domain is AI image generation – the process of training machines to generate new and original images using pre-existing datasets. However, such compute-intensive applications call for more than run-of-the-mill tools; in practice, they rely on graphics processing units (GPUs).

First, it helps to understand why GPUs are a formidable force in AI image generation. The answer lies essentially in their inherent architecture and functionality: parallel processing. GPUs possess numerous small cores that perform tasks concurrently, unlike a CPU’s few, powerful cores, which are built for fast sequential work. Consequently, a GPU can carry out thousands of the calculations behind each image at the same time, significantly boosting the speed, efficiency, and scalability of AI image generation.

Furthermore, GPU accelerators are designed for computationally intensive, data-heavy workloads. Therefore, they excel in tasks involving elaborate mathematical calculations, such as those pivotal in AI technology. Image generation, for instance, demands extensive calculations to analyze and learn patterns from large image sets, and then generate new images.

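The other definitive advantage GPUs hold over CPUs in AI image generation is the ability to move large volumes of data quickly. With high memory bandwidth, GPUs can feed their many cores fast enough to process large datasets and network structures, including high-resolution images. Bandwidth is not the same as capacity, though: the amount of on-board GPU memory still limits how large a model or batch can be processed at once.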
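A quick back-of-the-envelope calculation gives a feel for how fast high-resolution image batches consume memory. The sketch below counts only the raw input batch (not model weights or intermediate results). The batch shape and the helper name `tensor_bytes` are assumptions chosen for illustration.

```python
def tensor_bytes(batch, channels, height, width, bytes_per_value):
    """Raw size in bytes of a dense batch of images."""
    return batch * channels * height * width * bytes_per_value


MIB = 1024 ** 2

# Hypothetical batch: 16 RGB images at 1024 x 1024 pixels.
fp32_size = tensor_bytes(16, 3, 1024, 1024, bytes_per_value=4)  # 32-bit floats
fp16_size = tensor_bytes(16, 3, 1024, 1024, bytes_per_value=2)  # 16-bit floats

print(fp32_size / MIB)  # 192.0
print(fp16_size / MIB)  # 96.0
```

Halving the bytes per value halves the footprint, which is one reason lower-precision formats are popular for image models.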

Next, it’s critical to understand the role played by specific GPU features in optimizing the AI image generation process. NVIDIA, a titan in the realm of GPUs, provides tools like CUDA (Compute Unified Device Architecture), a parallel computing platform and application programming interface. CUDA allows developers to use NVIDIA GPUs for general-purpose computations traditionally handled by CPUs, improving computational speed and efficiency in AI applications.

Moreover, developers use Tensor Cores, specifically designed for AI applications by NVIDIA. These highly specialized cores perform mixed-precision matrix multiplication and accumulation operations, enabling enormous boosts in throughput while preserving the accuracy essential in AI image generation.
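The idea behind mixed precision can be emulated on a CPU with Python's `struct` module: keep the inputs in 16-bit floats, but accumulate the running sum in a wider format. This is a rough sketch of the numerical idea only, not of how Tensor Cores are implemented. The helper names and the test vector are assumptions.

```python
import struct


def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("e", struct.pack("e", x))[0]


def to_fp32(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 single-precision value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def dot(xs, ys, round_accumulator):
    """Dot product with fp16 inputs and a configurable accumulator format."""
    acc = 0.0
    for x, y in zip(xs, ys):
        product = to_fp16(x) * to_fp16(y)      # low-precision inputs
        acc = round_accumulator(acc + product)  # accumulator precision varies
    return acc


xs = [0.1] * 4096
ys = [1.0] * 4096

reference = sum(to_fp16(x) * to_fp16(y) for x, y in zip(xs, ys))
mixed = dot(xs, ys, to_fp32)      # fp16 inputs, fp32 accumulation
fp16_only = dot(xs, ys, to_fp16)  # fp16 inputs, fp16 accumulation

# The wider accumulator stays much closer to the reference sum.
assert abs(mixed - reference) < abs(fp16_only - reference)
```

With a 16-bit accumulator, small products eventually become too small to change the running total. Accumulating in a wider format avoids that while the inputs stay compact.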

Finally, the industry also uses vendor-neutral programming interfaces that can put GPUs to work. OpenCL (Open Computing Language) targets general-purpose parallel computation across GPUs, CPUs, and other processors, while OpenGL (Open Graphics Library) is primarily a graphics rendering interface. Interfaces like these allow developers to write applications that run across diverse platforms, optimizing computation and enriching AI image generation processes.

In conclusion, the fascinating world of AI image generation owes a major part of its success and growth to the powerful capabilities of GPUs. Optimized for computationally intensive workloads, equipped to handle large datasets and network structures, and backed by specific GPU features and programming interfaces, GPUs are undeniably pivotal in optimizing the AI image generation process. It only emphasizes that the choice between CPUs and GPUs extends beyond mere computing abilities – it’s more about finding the perfect fit for the task at hand.

Consideration of CPUs in AI image generation

Indeed, GPUs are mastering the game of AI image generation today. Their architecture is well suited to the intense matrix and vector calculations that constitute the foundation of deep learning – the heart of AI image generation.

GPUs utilize a SIMD-style (Single Instruction, Multiple Data) architectural design, which NVIDIA describes as SIMT (Single Instruction, Multiple Threads), that applies one instruction to many data elements at once. Such parallelism enhances the GPU’s capabilities in projects where large volumes of data must be processed simultaneously – a prerequisite for efficient AI image generation.

Particularly in deep learning models, GPUs are poised to shine further. One cannot ignore the immense strides made in GPU technology, such as CUDA, a parallel computing platform and application programming interface from NVIDIA, and Tensor Cores, specialized hardware designed to accelerate deep learning workloads. Both have sharply reduced processing times and radically enhanced image generation speed.

However, the CPU isn’t perched on the sidelines. It’s not game-set-match in favor of GPUs. The CPU, despite its perceived disadvantages, continues to play a vital role within AI image generation.

CPUs offer greater flexibility and are versatile in dealing with complex, branch-heavy logic and decision-making tasks. Their strength in executing long series of dependent instructions gives them an edge over GPUs in the sequential parts of an AI image workflow, such as high-level control logic, planning, and step-by-step decision-making – areas where GPUs usually stumble.

Moreover, in terms of system topology, CPUs are the system’s “brain,” directing task routing, memory allocation, and data handling amongst different hardware components. Their centralized layout makes them adept at ensuring a smooth, efficient workflow, even while integrating the powerful, parallel processing abilities of GPUs.

The choice between CPU and GPU consequently isn’t a binary one; it’s more a matter of identifying the one that best integrates with the specific characteristics of your AI image generation project. The decision hinges on factors such as data size, complexity of tasks and algorithms, how many tasks need to be processed simultaneously, and the necessity of sequential logic.
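One way to reason about these factors is Amdahl's law, a standard formula (not something specific to this article) that estimates overall speedup when only part of a workload can be accelerated. The sketch below assumes a hypothetical accelerator that runs the parallel portion 100 times faster. The function name and the numbers are illustrative.

```python
def amdahl_speedup(parallel_fraction: float, accelerator_speedup: float) -> float:
    """Overall speedup when only `parallel_fraction` of the work is accelerated."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if accelerator_speedup <= 0:
        raise ValueError("accelerator_speedup must be positive")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / accelerator_speedup)


# Hypothetical workloads with different shares of parallelizable work.
for fraction in (0.5, 0.9, 0.99):
    print(fraction, round(amdahl_speedup(fraction, 100.0), 2))
# 0.5 1.98
# 0.9 9.17
# 0.99 50.25
```

Even with a very fast accelerator, the sequential share of the job caps the overall gain, which is why the amount of sequential logic in a project matters so much to the choice.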

As we stride forward into the exciting landscape of AI image generation, rather than viewing CPUs and GPUs as competitors, it’s more constructive to envisage them as complementary technologies, each boasting strengths that fill the gaps of the other. Together, they can harmonize to create a powerful machine that optimizes AI image generation processes. Therefore, categorically deeming CPUs outclassed by GPUs may not render the full picture, because CPUs still hold a relevant role in certain aspects of AI image generation, and vice versa.

The tech world thrives on evolution – what may stand ‘outclassed’ today could return with groundbreaking features tomorrow. Shift your focus to the symbiotic relationship between CPUs and GPUs instead of the ongoing war between them. Remember, technology is a tool. The real winners will be those who wield it with wisdom.

The optimum balance: CPU-GPU collaboration in AI image generation

Unleashing the Combined Power of CPUs and GPUs in AI Image Generation

Harnessing the collaborative capabilities of Central Processing Units (CPUs) and Graphics Processing Units (GPUs) has vaulted artificial intelligence (AI) image generation into the future. This collaborative computational approach, leveraging both technologies’ unique strengths, maximizes performance and efficiency. In the context of AI image generation, CPUs and GPUs are not rivals, but powerful allies unlocking previously unattainable possibilities.

The prowess of a CPU lies within its flexibility and precision, excelling particularly in sequential computation tasks. CPUs shine in complex logic-based operations and decision-making tasks, presenting an irreplaceable value. The superior branching capabilities of CPUs allow for efficient handling of diverse logic trees, essential in tasks requiring multiple decisions. Furthermore, CPUs are crucially responsible for managing system topology, routing tasks, and data distribution to their GPU counterparts.

In contrast to the broad-scope capabilities of CPUs, GPUs bring unparalleled performance in tasks requiring concurrent processing. This performance advantage is rooted in the Single Instruction, Multiple Data (SIMD) style of GPU architecture. This design allows for the simultaneous processing of large datasets, perfect for compute-intensive image generation tasks in AI.

Advancements such as CUDA and Tensor Cores have dialed up the GPUs’ potential. CUDA, a parallel computing platform and API from NVIDIA, facilitates software development, allowing developers to squeeze more performance out of NVIDIA GPUs. Tensor Cores, on the other hand, are specialized circuits designed specifically for AI and deep learning tasks. They perform mixed-precision matrix operations, accepting lower-precision inputs in exchange for much higher throughput, and so deliver vastly improved performance.

In AI image generation, the parallel processing capabilities of GPUs handle calculation-heavy tasks, while CPUs execute the tasks needing intricate logic. This division of labor creates an effective symbiosis, crucial for optimal performance in AI image generation processes.
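This division of labor can be sketched as a small producer and consumer pipeline. In the sketch, a CPU-side thread does the branching, clean-up logic and groups work into batches, while a stand-in function plays the role of the GPU by applying the same arithmetic to every item. All names here (`prepare_batches`, `fake_accelerator`, `run_pipeline`) and the sample prompts are hypothetical, and no real GPU is involved.

```python
import queue
import threading


def prepare_batches(prompts, batch_size, out):
    """CPU-side work: branching logic, clean-up, and batching."""
    batch = []
    for prompt in prompts:
        text = prompt.strip().lower()
        if not text:            # decision-making: skip empty requests
            continue
        batch.append(text)
        if len(batch) == batch_size:
            out.put(batch)
            batch = []
    if batch:
        out.put(batch)          # flush the final partial batch
    out.put(None)               # sentinel: no more work


def fake_accelerator(batch):
    """Stand-in for the GPU: identical arithmetic applied to every item."""
    return [len(text) ** 2 for text in batch]


def run_pipeline(prompts, batch_size=2):
    batches = queue.Queue(maxsize=4)
    producer = threading.Thread(
        target=prepare_batches, args=(prompts, batch_size, batches)
    )
    producer.start()
    results = []
    # Consume batches as they arrive, while the producer keeps preparing more.
    while (batch := batches.get()) is not None:
        results.extend(fake_accelerator(batch))
    producer.join()
    return results


print(run_pipeline(["  A cat ", "", "Red fox", "sky"]))  # [25, 49, 9]
```

The bounded queue lets preparation and heavy computation overlap, so neither side sits idle waiting for the other longer than necessary.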

Choosing wisely between CPUs and GPUs can significantly impact the success of AI image generation projects. Factors such as the task’s nature, the extent of data, and task complexity are instrumental in the decision-making process. CPUs and GPUs possess distinct capabilities and should not be viewed as interchangeable. Instead, consider each technology for the unique benefits it offers, and use them in coordination to unlock their combined potential in AI image generation.

In the ever-evolving realm of technology, the importance of staying abreast of advancements in CPUs and GPUs is paramount. As their technology continues to advance, we can expect even more efficient and powerful synergies in AI image generation tasks. A thorough understanding of the current capabilities and potential future advancements in CPU and GPU technology is an invaluable asset for aficionados navigating the accelerating pace of the AI revolution.

Harnessing the combined power of CPUs and GPUs in AI image generation tasks presents an exciting frontier, blending art and science, human creativity, and machine efficiency. As we delve deeper into the universe of artificial intelligence, this cooperative approach towards technology will undoubtedly lead to unprecedented discoveries and innovation.

Embrace the revolution – the world of AI-driven image generation is beholden to the combined power and adaptability of CPUs and GPUs. The future is not only about harnessing technology but wielding it wisely. The tools are in our hands; it’s time to craft the future.

As computing power continues to develop at an astonishing rate, a nuanced understanding of the interplay between CPU and GPU capabilities becomes even more vital in the realm of AI image generation. GPUs, through their superior parallel processing aptitudes, often outperform CPUs in this space.

However, CPUs maintain their importance for tasks requiring sequential processing or where budgetary and energy considerations come into play. It is this coexistence and carefully calibrated interplay of GPUs and CPUs that can pave the way towards a future where AI image generation is optimally efficient, balancing superior processing power, cost-effectiveness, and energy efficiency. The boundless possibilities of AI beckon us forward, with the harmonious CPU-GPU collaboration serving as a powerful engine in this exciting journey.

Emad Morpheus is a tech enthusiast with a unique flair for AI and art. Backed by a Computer Science background, he dove into the captivating world of AI-driven image generation five years ago. Since then, he has been honing his skills and sharing his insights on AI art creation through his blog posts. Outside his tech-art sphere, Emad enjoys photography, hiking, and piano.