Deep learning, known for its powerful methods for modeling complex phenomena, encompasses a wide variety of architectures, principles, and strategies. One of these is the concept of stable diffusion, which contributes significantly to the efficiency and feasibility of deep learning models.

This article examines the intersection of stable diffusion processes and deep learning, focusing on how the stability of diffusion processes benefits the broader field of machine learning. It describes the main deep learning architectures, explains the essence of stable diffusion, and outlines how the two are intertwined.

Practical applications in diverse industry sectors and further exploration into the future of this field also form integral parts of this investigative journey.

Contents

- 1 Fundamentals of Stable Diffusion Processes

- 2 Understanding Deep Learning

- 3 The Interface of Stable Diffusion and Deep Learning

- 3.1 Understanding Stable Diffusion Deep Learning

- 3.2 Stability: A Key Requirement in Deep Learning

- 3.3 The Challenge of Ensuring Stability in Deep Learning Models

- 3.4 Ways to Achieve Stability in Diffusion Processes

- 3.5 Comparison with Other Stability Strategies in Deep Learning

- 3.6 Deep Learning Research: The Importance of Stable Diffusion

- 4 Real Life Applications of Stable Diffusion Deep Learning

- 5 Future Prospects and Challenges of Stable Diffusion Deep Learning

Fundamentals of Stable Diffusion Processes

Defining Stable Diffusion Processes

Stability in a diffusion process describes how its state behaves over time. A stable diffusion process is one in which deviations from equilibrium decay rather than grow, so the system gradually settles into a steady state. Its statistical distribution stops changing, even though individual trajectories keep fluctuating. Processes of this kind appear in many high-dimensional systems and have been studied extensively in mathematical physics.
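The Ornstein-Uhlenbeck process, dX = -θX dt + σ dW, is a textbook example of a diffusion that is stable in this sense. Wherever it starts, its distribution converges to a stationary Gaussian with variance σ²/(2θ). The sketch below simulates many independent paths with the Euler-Maruyama scheme. The values of θ, σ, the step size, and the starting point are illustrative assumptions and do not come from the article.

```python
import numpy as np

def simulate_ou(theta=1.0, sigma=1.0, x0=5.0, dt=0.01, n_steps=1000, n_paths=10_000, seed=0):
    """Euler-Maruyama simulation of dX = -theta * X dt + sigma dW for many independent paths."""
    rng = np.random.default_rng(seed)
    x = np.full(n_paths, x0, dtype=float)  # every path starts far from equilibrium
    for _ in range(n_steps):
        # The drift pulls each path back toward 0; the noise term keeps it fluctuating.
        x = x - theta * x * dt + sigma * np.sqrt(dt) * rng.standard_normal(n_paths)
    return x

samples = simulate_ou()
# After t = n_steps * dt = 10, the mean is close to 0 and the variance is close to
# sigma**2 / (2 * theta) = 0.5, i.e. the process has settled into its stationary distribution.
print(samples.mean(), samples.var())
```

Individual paths never stop moving. What settles is the distribution across paths, and that is the "steady state" the paragraph above refers to.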

Understanding Diffusion in Machine Learning

In machine learning, diffusion plays a crucial role because it can transform one data distribution into another. The transformation can be pictured as each data point drifting over time along a random trajectory until, collectively, the points follow the target distribution (for example, pure Gaussian noise). Diffusion processes are stochastic: the random movement of the data points is what carries them from the initial state to the final one.
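The forward (noising) half of a denoising diffusion model such as DDPM makes this transformation concrete. Take a noise schedule β_1, …, β_T and define ᾱ_t = ∏(1 − β_s). A data point can then be moved directly to step t using x_t = √ᾱ_t · x_0 + √(1 − ᾱ_t) · ε, where ε ~ N(0, I). The sketch below uses the linear schedule from the DDPM paper (1e-4 to 0.02 over 1000 steps) on a toy two-cluster dataset, which is an illustrative assumption. By the final step the data distribution has become approximately standard Gaussian noise.

```python
import numpy as np

def linear_alpha_bars(T=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative products alpha_bar_t of (1 - beta_t) for a linear beta schedule."""
    betas = np.linspace(beta_start, beta_end, T)
    return np.cumprod(1.0 - betas)

def q_sample(x0, t, alpha_bars, rng):
    """Draw x_t ~ q(x_t | x_0) in closed form (t is a 0-based step index)."""
    eps = rng.standard_normal(x0.shape)
    return np.sqrt(alpha_bars[t]) * x0 + np.sqrt(1.0 - alpha_bars[t]) * eps

rng = np.random.default_rng(0)
# Hypothetical 1-D "dataset": two tight clusters at -3 and +3.
x0 = np.concatenate([rng.normal(-3.0, 0.3, 5000), rng.normal(3.0, 0.3, 5000)])
alpha_bars = linear_alpha_bars()
for t in (0, 250, 999):
    xt = q_sample(x0, t, alpha_bars, rng)
    # Early steps keep the two clusters (std about 3); the last step is close to N(0, 1).
    print(t, xt.mean(), xt.std())
```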

Diffusion in Deep Learning

In deep learning, the concept of diffusion becomes even more fundamental. In particular, a stable diffusion process provides a solid basis for building stable deep learning models. Within deep learning, stable diffusion refers to the subfield that explores how the concept of a stable diffusion process can be used to train models and to understand their learning dynamics.

Stable Diffusion Process for Training

Deep learning models can exploit the stability of the diffusion process to make gradual, step-by-step changes to the network weights during training. Each training run can be regarded as a diffusion process that starts from the initial random weights and ends at the final learned weights. Researchers have reported that this gradual change in weights over iterations, which mirrors a stable diffusion process, is associated with better generalization and robustness.
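Stochastic gradient Langevin dynamics (SGLD) is one way to make the "training as diffusion" picture concrete. It adds Gaussian noise with variance 2ηT to each gradient step, so the weights follow a discretized Langevin diffusion whose stationary distribution is proportional to exp(−L(w)/T). The sketch below applies it to a toy quadratic loss and runs many chains in parallel. The loss, the learning rate η, and the temperature T are assumptions for illustration. The article does not say that SGLD is the training method it has in mind.

```python
import numpy as np

W_STAR = np.array([1.0, -2.0])  # hypothetical optimum of the toy loss

def grad_loss(w):
    # Toy quadratic loss L(w) = 0.5 * ||w - W_STAR||^2, so grad L(w) = w - W_STAR.
    return w - W_STAR

def sgld(n_chains=2000, lr=0.01, temperature=0.01, n_steps=2000, seed=0):
    rng = np.random.default_rng(seed)
    w = 3.0 * rng.standard_normal((n_chains, 2))  # random initial weights, one row per chain
    for _ in range(n_steps):
        noise = rng.standard_normal(w.shape)
        # Gradient step plus Gaussian noise: an Euler-Maruyama step of the Langevin SDE
        # dw = -grad L(w) dt + sqrt(2 T) dB with dt = lr.
        w = w - lr * grad_loss(w) + np.sqrt(2.0 * lr * temperature) * noise
    return w

final = sgld()
print(final.mean(axis=0))  # close to W_STAR
print(final.var(axis=0))   # close to the temperature, 0.01
```

Lowering the temperature shrinks the spread of the weights around the optimum. At T = 0 the update reduces to plain gradient descent.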

Stability in Diffusion Models

Stability is an important property of diffusion models in deep learning. In this sense, stability refers to a trained model’s ability to produce reliable predictions when its input is slightly perturbed. Such models behave predictably and perform consistently, which makes them suitable for real-world scenarios where data often vary.
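A Lipschitz bound is one common way to quantify this kind of input stability. For a feed-forward network with 1-Lipschitz activations such as tanh or ReLU, the product of the layers' spectral norms caps how far the output can move relative to the size of an input perturbation. The sketch below builds a small randomly initialized network and checks that random perturbations never exceed the bound. The layer sizes, weight scale, and perturbation size are assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
# Hypothetical 3-layer MLP with tanh activations; sizes and init scale are illustrative.
sizes = [8, 32, 32, 4]
weights = [rng.standard_normal((n_out, n_in)) / np.sqrt(n_in)
           for n_in, n_out in zip(sizes[:-1], sizes[1:])]

def forward(x):
    h = x
    for W in weights[:-1]:
        h = np.tanh(W @ h)
    return weights[-1] @ h

# Largest singular value of each layer. Since tanh is 1-Lipschitz, the product
# upper-bounds ||f(x + d) - f(x)|| / ||d|| for every input x and perturbation d.
lipschitz_bound = np.prod([np.linalg.norm(W, 2) for W in weights])

x = rng.standard_normal(sizes[0])
ratios = []
for _ in range(1000):
    d = 1e-3 * rng.standard_normal(sizes[0])
    ratios.append(np.linalg.norm(forward(x + d) - forward(x)) / np.linalg.norm(d))

print(f"bound={lipschitz_bound:.2f}, worst observed ratio={max(ratios):.2f}")
```

The bound is usually loose. Techniques such as spectral normalization tighten stability guarantees by constraining each factor of the product directly.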

Stable Diffusion Deep Learning

In Stable Diffusion Deep Learning, researchers build the principles of stable diffusion processes into the design of deep learning architectures. This gives them a way to guide how information diffuses through the model and thus to influence the stability of learning. Because stability is built into the training process itself rather than added afterwards, the resulting models tend to be more robust and to generalize better.

Significance of Stability in Diffusion Processes

Recognizing the significance of stable diffusion processes is crucial. Unstable processes can make models unreliable and unpredictable, leading to erroneous results. Incorporating stability improves a model’s robustness and its ability to generalize across diverse inputs and conditions. In deep learning, therefore, the value of a stable diffusion process lies in its contribution to robust, consistent, and well-generalized models.

Understanding Deep Learning

Deep Learning: An Integral Component of Machine Learning

Deep learning is a subfield of machine learning and artificial intelligence (AI). It is driven predominantly by artificial neural networks, especially deep neural networks (DNNs), and it diverges from conventional AI approaches that depend heavily on human-programmed rules and algorithms. Because they learn directly from large volumes of data, deep learning systems can become progressively more accurate while relying less on hand-crafted rules.

Types of Deep Learning Architectures

Convolutional neural networks (CNNs) and recurrent neural networks (RNNs) are two prominent deep learning architectures. They are widely used in applications involving images, audio, text, or time-series data.

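- CNNs process image data effectively by sliding small learned filters (convolutions) across the image’s grid of pixels. This lets them recognize local patterns, such as edges and textures, wherever those patterns appear.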

- RNNs, on the other hand, are well suited to sequential data. They maintain a hidden state that summarizes earlier elements of the sequence and use it to influence later outputs.
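The two mechanisms in the list above can each be sketched in a few lines of NumPy. The first is a 2-D convolution that slides a single small filter across the pixel grid. As in most deep learning libraries, it is implemented as cross-correlation, without flipping the kernel. The second is a vanilla RNN that carries a hidden state from one sequence element to the next. The image, the edge-detecting kernel, the shapes, and the random weights are all illustrative assumptions.

```python
import numpy as np

def conv2d(image, kernel):
    """'Valid' 2-D cross-correlation: the same kernel is applied at every position."""
    kh, kw = kernel.shape
    out_h, out_w = image.shape[0] - kh + 1, image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

def rnn_forward(xs, W_xh, W_hh, b_h):
    """Vanilla RNN: h_t = tanh(W_xh x_t + W_hh h_{t-1} + b_h)."""
    h = np.zeros(W_hh.shape[0])
    states = []
    for x in xs:
        h = np.tanh(W_xh @ x + W_hh @ h + b_h)  # the new state depends on the previous one
        states.append(h)
    return np.stack(states)

# A vertical-edge image: dark left half, bright right half.
image = np.zeros((5, 6))
image[:, 3:] = 1.0
vertical_edge = np.array([[-1.0, 1.0], [-1.0, 1.0]])  # responds to left-to-right increases
feature_map = conv2d(image, vertical_edge)
print(feature_map)  # shape (4, 5): 2.0 in the column where the edge sits, 0.0 elsewhere

rng = np.random.default_rng(0)
hidden, inp, steps = 4, 3, 5
states = rnn_forward(rng.standard_normal((steps, inp)),
                     0.5 * rng.standard_normal((hidden, inp)),
                     0.5 * rng.standard_normal((hidden, hidden)),
                     np.zeros(hidden))
print(states.shape)  # (5, 4): one hidden state per sequence element
```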

Numerous fields, including autonomous vehicles, healthcare, finance, voice-assistant technology, and more, have started leveraging deep learning for improved functionalities and solutions.

Introduction to Stable Diffusion Processes

Stable diffusion processes extend the reach of deep learning and have become a significant area of research. The familiar saying “a picture is worth a thousand words” applies to deep learning as well: an image can contain a vast amount of information and be used to generate a wide range of outputs. Stable diffusion is a process used in deep learning to generate new, synthetic data from existing data in a stable and controlled manner.

Stable Diffusion in Deep Learning

In deep learning, stable diffusion processes play a significant role in generative models. These models can create new data instances similar to the data they were trained on, essentially ‘dreaming up’ new content. One of the most popular types of generative model is the Generative Adversarial Network (GAN). In a GAN, two networks, a generator and a discriminator, are trained against each other so that the generator learns to produce realistic data.

However, GANs are famously difficult to train, because the two adversaries (the generator and the discriminator) often fail to reach equilibrium. They can also struggle to capture long-range dependencies in the data.

Stable diffusion processes were introduced to tackle these problems, and they take an entirely different approach. They start from random noise and gradually shape it into a synthetic data sample through many small denoising steps, each guided by a deep learning model. Over time, the random noise is ‘diffused’ into a recognizable piece of data. Diffusion models such as Denoising Diffusion Probabilistic Models (DDPMs) can generate precise, high-quality samples and scale well to high-dimensional data.
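The gradual noise-to-data shaping described above can be sketched with DDPM’s ancestral sampling loop. The update is x_{t−1} = (x_t − β_t/√(1 − ᾱ_t) · ε̂)/√α_t + √β_t · z, where ε̂ is the predicted noise and z ~ N(0, 1). In a real model, a trained network supplies ε̂ at each step. To keep this sketch self-contained, it assumes instead that the data are 1-D Gaussian N(μ, s²). For Gaussian data the optimal noise prediction E[ε | x_t] has a closed form, so no network is needed. Starting from pure noise, the loop should return samples whose mean and standard deviation are close to μ and s.

```python
import numpy as np

def ddpm_sample_gaussian(mu=2.0, s=0.5, n=20_000, T=1000, seed=0):
    """DDPM ancestral sampling for hypothetical 1-D data N(mu, s^2), using the exact E[eps | x_t]."""
    rng = np.random.default_rng(seed)
    betas = np.linspace(1e-4, 0.02, T)  # linear schedule, as in the DDPM paper
    alphas = 1.0 - betas
    alpha_bars = np.cumprod(alphas)

    def eps_hat(x, t):
        # Stand-in for a trained network: x_t = a * x0 + b * eps with x0 ~ N(mu, s^2),
        # so E[eps | x_t] = b * (x_t - a * mu) / (a^2 s^2 + b^2).
        a, b = np.sqrt(alpha_bars[t]), np.sqrt(1.0 - alpha_bars[t])
        return b * (x - a * mu) / (a**2 * s**2 + b**2)

    x = rng.standard_normal(n)  # start from pure noise
    for t in range(T - 1, -1, -1):
        mean = (x - betas[t] / np.sqrt(1.0 - alpha_bars[t]) * eps_hat(x, t)) / np.sqrt(alphas[t])
        # Add fresh noise (variance beta_t) at every step except the last.
        x = mean + np.sqrt(betas[t]) * rng.standard_normal(n) if t > 0 else mean
    return x

samples = ddpm_sample_gaussian()
print(samples.mean(), samples.std())  # close to 2.0 and 0.5
```

Swapping the closed-form `eps_hat` for a neural network trained on real data gives the usual DDPM sampler. The cost is the same T sequential model evaluations.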

Introduction to Stable Diffusion in Deep Learning

Stable diffusion has emerged as an integral part of deep learning’s evolution, enabling more intricate models. Data continue to grow rapidly in both volume and complexity, and deep learning techniques that employ stable diffusion are expected to handle this influx effectively and produce insightful results. Potential applications span a wide variety of domains, with possible advances in living standards, disease diagnosis, and space exploration.

The Interface of Stable Diffusion and Deep Learning

Understanding Stable Diffusion Deep Learning

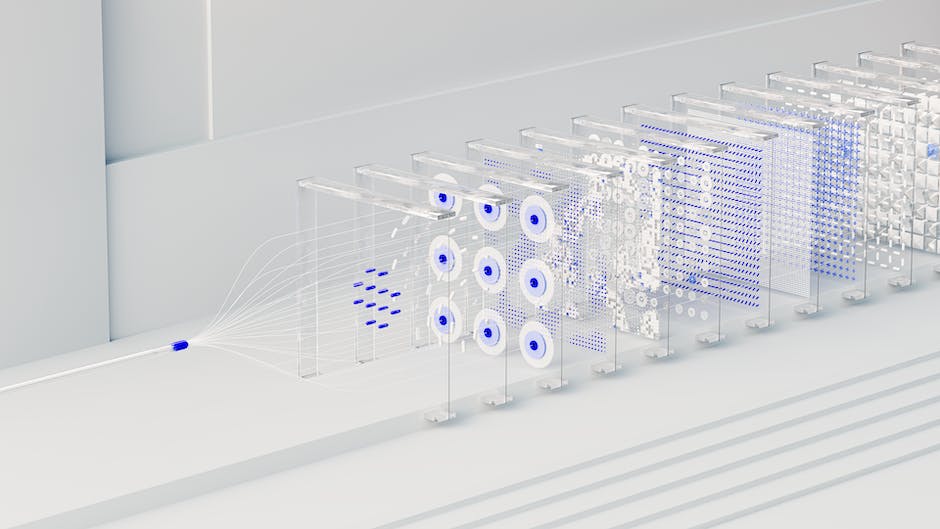

In this view, stable diffusion, a cornerstone of deep learning, explains how information flows within a neural network. Central to deep learning algorithms is the orchestrated, accurate propagation of data through many layers of computation nodes.

This propagation resembles diffusion: the input data gradually spread through the network, and each layer refines them into an increasingly abstract representation. Stability here means that small changes in either the input data or the model parameters produce only small changes in the output.

Without stability, minor inconsistencies can cause large changes in the output, making the system’s behavior erratic and unpredictable.

Stability: A Key Requirement in Deep Learning

Stability is crucial in deep learning models primarily due to its role in generalization, i.e., the model’s ability to apply knowledge learned from the training data to unseen test data. A stable model will consistently provide reliable predictions even when presented with new data.

In contrast, an unstable model might perform exceedingly well on the training data but poorly on new test data, a phenomenon known as overfitting. Achieving stability in the diffusion process therefore helps make deep learning models more robust.

The Challenge of Ensuring Stability in Deep Learning Models

Ensuring stability in deep learning models is not straightforward because of their complexity. Increasing the number of layers (the model’s depth) can increase capacity and performance. However, it also makes training harder and raises the risk of instability.

As information propagates through many layers, it can be dramatically amplified or attenuated, leading to the exploding-gradient or vanishing-gradient problem, respectively. Both make the model unstable and learning difficult.
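A deep stack of linear layers is enough to reproduce this effect. During backpropagation the gradient is multiplied by each layer’s transposed weight matrix. Unless the weights are scaled carefully, its norm therefore tends to shrink or grow roughly geometrically with depth. The sketch below compares three weight scales for random Gaussian matrices, including the 1/√fan_in scaling used by LeCun/Xavier-style initialization. Using purely linear layers is a simplifying assumption; real networks also have nonlinearities and biases.

```python
import numpy as np

def backprop_grad_norm(scale, depth=50, width=256, seed=0):
    """Norm of a unit-length gradient after flowing back through `depth` random linear layers."""
    rng = np.random.default_rng(seed)
    g = rng.standard_normal(width)
    g /= np.linalg.norm(g)
    for _ in range(depth):
        # Entries ~ N(0, scale^2 / width); scale = 1 corresponds to 1/sqrt(fan_in) initialization.
        W = scale * rng.standard_normal((width, width)) / np.sqrt(width)
        g = W.T @ g  # chain rule through the layer y = W x
    return np.linalg.norm(g)

for scale in (0.5, 1.0, 1.5):
    # Roughly scale ** depth: vanishing for 0.5, about 1 for 1.0, exploding for 1.5.
    print(scale, backprop_grad_norm(scale))
```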

Ways to Achieve Stability in Diffusion Processes

There are several strategies for achieving stability in the diffusion processes of deep learning models:
- Normalizing the inputs and outputs of each layer (for example with batch normalization or layer normalization) prevents extreme values and keeps the signal in a reasonable range.
- Careful initialization helps weights and biases start in a state conducive to learning.
- Specific types of regularization help prevent overfitting.
- Gradually adjusting the learning rate during training, known as learning rate scheduling, avoids drastic updates.
- Adaptive optimization algorithms such as RMSprop or Adam can improve stability by scaling each parameter’s step according to a running estimate of its gradient magnitude.
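Three of these techniques can be written from scratch to show what they compute:
- Layer normalization rescales each example’s features to zero mean and unit variance.
- A step-decay schedule lowers the learning rate at fixed intervals.
- The Adam update uses its usual default hyperparameters (β1 = 0.9, β2 = 0.999, ε = 1e-8).

The function names, the badly scaled quadratic objective, and the decay settings are illustrative assumptions, not any particular library’s API.

```python
import numpy as np

def layer_norm(x, eps=1e-5):
    """Normalize each row to zero mean and unit variance (no learned gain or bias here)."""
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def step_decay(base_lr, step, drop=0.5, every=200):
    """Learning-rate schedule: multiply the rate by `drop` every `every` steps."""
    return base_lr * drop ** (step // every)

def adam_minimize(grad_fn, w, base_lr=0.1, n_steps=1000, b1=0.9, b2=0.999, eps=1e-8):
    m = np.zeros_like(w)  # running mean of gradients
    v = np.zeros_like(w)  # running mean of squared gradients
    for t in range(1, n_steps + 1):
        g = grad_fn(w)
        m = b1 * m + (1 - b1) * g
        v = b2 * v + (1 - b2) * g * g
        m_hat = m / (1 - b1 ** t)  # bias correction for the zero initialization
        v_hat = v / (1 - b2 ** t)
        # Per-parameter step size: large-gradient coordinates are scaled down.
        w = w - step_decay(base_lr, t - 1) * m_hat / (np.sqrt(v_hat) + eps)
    return w

# Hypothetical badly scaled quadratic: L(w) = 0.5 * (100 * w0^2 + w1^2).
curvature = np.array([100.0, 1.0])
w_final = adam_minimize(lambda w: curvature * w, np.array([1.0, 1.0]))
print(w_final)  # both coordinates end up near 0 despite the 100x curvature gap

h = layer_norm(np.array([[1.0, 2.0, 3.0, 4.0], [10.0, 20.0, 30.0, 40.0]]))
print(h.mean(axis=-1), h.std(axis=-1))  # about 0 and about 1 for each row
```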

Comparison with Other Stability Strategies in Deep Learning

Stable diffusion is often compared with other strategies for improving stability in deep learning. Techniques such as dropout regularization, early stopping, and adding noise to inputs also contribute to model stability. These methods are not mutually exclusive and can be used together. Stability in the diffusion process helps information propagate through deep networks without degradation. The other strategies mainly prevent overfitting or provide robustness to minor variations.
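Two of the techniques named above are simple enough to sketch directly:
- Inverted dropout zeroes each activation with probability p during training and rescales the survivors by 1/(1 − p), so the expected activation is unchanged.
- Early stopping with a patience counter halts training once validation loss has not improved for a set number of epochs.

The function names and the validation-loss values below are hypothetical and serve only to illustrate the logic.

```python
import numpy as np

def inverted_dropout(x, p, rng, training=True):
    """Zero each unit with probability p and rescale the rest so that E[output] == x."""
    if not training or p == 0.0:
        return x
    keep = rng.random(x.shape) >= p
    return x * keep / (1.0 - p)

def early_stopping_epoch(val_losses, patience=3):
    """Return (stop_epoch, best_epoch): stop after `patience` epochs without improvement."""
    best_loss, best_epoch = float("inf"), 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return epoch, best_epoch
    return len(val_losses) - 1, best_epoch

rng = np.random.default_rng(0)
x = np.ones(100_000)
print(inverted_dropout(x, p=0.5, rng=rng).mean())  # close to 1.0

# Hypothetical validation curve: improves, then starts to overfit.
val = [1.0, 0.8, 0.65, 0.6, 0.62, 0.64, 0.7, 0.75, 0.8]
print(early_stopping_epoch(val))  # (6, 3)
```

In practice the weights saved at `best_epoch` are restored after stopping, so the model that is kept is the one from before overfitting set in.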

Deep Learning Research: The Importance of Stable Diffusion

Stable diffusion deep learning is rapidly gaining research attention because of its considerable impact on model performance and generalization. As deep learning technologies mature, the role of stable diffusion becomes increasingly important, which puts it in the spotlight as a means of building better models.

Real Life Applications of Stable Diffusion Deep Learning

Understanding Stable Diffusion in the Context of Deep Learning

Within machine learning and artificial intelligence (AI), deep learning is a sophisticated subset loosely inspired by the learning process of the human brain. It gives machines the ability to learn from examples in datasets. Stable diffusion is an important part of a category of deep learning known as generative modeling.

Generative models aim to learn the underlying data distribution of the training set so that new, equally valid data points can be created. Stable diffusion deep learning uses a stochastic differential equation (SDE) to model diffusion. The process is analogous to particles moving from an area of high concentration to one of low concentration until they reach equilibrium.
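The score-based SDE formulation of diffusion models writes the forward noising process and its time reversal as a pair of equations. In them, f is a drift term, g a noise scale, w a Wiener process (w̄ its reverse-time counterpart), and ∇ₓ log p_t(x) the score of the noisy data distribution. The score is the quantity a neural network is trained to approximate. The variance-preserving (VP) choice on the last line is one common instance and not necessarily the one the article has in mind.

```latex
\begin{aligned}
\text{forward:}\quad & d\mathbf{x} = \mathbf{f}(\mathbf{x}, t)\,dt + g(t)\,d\mathbf{w}, \\
\text{reverse:}\quad & d\mathbf{x} = \left[\mathbf{f}(\mathbf{x}, t) - g(t)^{2}\,\nabla_{\mathbf{x}} \log p_t(\mathbf{x})\right] dt + g(t)\,d\bar{\mathbf{w}}, \\
\text{VP example:}\quad & \mathbf{f}(\mathbf{x}, t) = -\tfrac{1}{2}\beta(t)\,\mathbf{x}, \qquad g(t) = \sqrt{\beta(t)}.
\end{aligned}
```

The forward SDE requires no learning; only the score term in the reverse SDE does. Replacing that term with a learned estimate and integrating backward in time from noise is what turns the process into a generator.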

The goal is a generative model that can create new sample data points resembling the data it has learned from.

Stable Diffusion in Healthcare

Stable diffusion deep learning can play an important role in healthcare. For instance, it can generate synthetic patient data that mimic real-world patient records. This can help preserve privacy, because the generated records are not intended to correspond to any real individual patient.

It can also be used to model disease progression, helping physicians predict a patient’s future health status from past medical records and genetic, socio-economic, and behavioral data.

Stable Diffusion for E-Commerce

In e-commerce, stable diffusion deep learning can play a significant role in recommendation systems. Such systems can rely on generative models that learn from customers’ buying patterns and generate new purchase sequences or behaviors. With stable diffusion, these systems can produce more realistic and diverse recommendations, improving customers’ shopping experience.

Stable Diffusion in Autonomous Vehicles and Transportation

One important application of stable diffusion deep learning is in transportation, particularly autonomous vehicles (AVs). AVs generate and consume substantial quantities of data, which can be used to improve their operation.

For instance, AVs can use stable diffusion deep learning to learn from various driving situations and generate simulated scenarios to improve their decision-making algorithms. In route optimization, stable diffusion can help model traffic patterns and predict congestion points, contributing to more efficient route planning.

Stable Diffusion for Social Media and Digital Marketing

On social media platforms and in digital marketing, generative models based on stable diffusion deep learning can be used to create realistic content for various purposes. In digital advertising, these models can generate ad content tailored to individual user preferences. On social media platforms, they can be used to create personalized content recommendations that enhance user experience and engagement.

The burgeoning field of Stable Diffusion Deep Learning is attracting substantial attention because of its many applications across sectors. It stands out for its ability to generate high-quality, diverse, and realistic data samples. Used well, this technology could enable major advances in the products and services of many businesses and industries.

Future Prospects and Challenges of Stable Diffusion Deep Learning

Delineating Stable Diffusion Deep Learning

As a distinct offshoot of machine learning and artificial intelligence, Stable Diffusion Deep Learning trains models using stochastic differential equations together with denoising objectives. It provides a viable framework for modeling intricate data distributions. It transforms a simple prior distribution, such as Gaussian noise, into the complex data distribution by pairing a diffusion process with a neural network that learns to reverse that process in time.

This contrasts with conventional supervised neural networks, which focus on reducing prediction error on a labeled training set. A diffusion model instead learns to make its generated data statistically similar to the training data, in a consistent and reliable manner.
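In practice, this similarity objective is usually implemented as a denoising loss. In the simplified DDPM objective, a training example is noised to a random step t, and the network learns to predict the added noise by minimizing E‖ε − ε_θ(x_t, t)‖². The sketch below estimates that loss for two stand-in predictors: one that always outputs zero, and the exact conditional mean E[ε | x_t]. It assumes 1-D Gaussian data so that the optimal predictor is available in closed form. A trained network would score somewhere between the two.

```python
import numpy as np

MU, S = 2.0, 0.5  # hypothetical 1-D data distribution N(MU, S^2)
betas = np.linspace(1e-4, 0.02, 1000)
alpha_bars = np.cumprod(1.0 - betas)

def optimal_eps(xt, t):
    """E[eps | x_t] for Gaussian data: stands in for a perfectly trained network."""
    a, b = np.sqrt(alpha_bars[t]), np.sqrt(1.0 - alpha_bars[t])
    return b * (xt - a * MU) / (a**2 * S**2 + b**2)

def zero_eps(xt, t):
    """Trivial baseline that always predicts zero noise."""
    return np.zeros_like(xt)

def denoising_loss(eps_model, n=50_000, seed=0):
    """Monte Carlo estimate of the simplified DDPM loss E||eps - eps_model(x_t, t)||^2."""
    rng = np.random.default_rng(seed)
    x0 = rng.normal(MU, S, n)
    t = rng.integers(0, len(betas), n)  # a random timestep for each example
    eps = rng.standard_normal(n)
    xt = np.sqrt(alpha_bars[t]) * x0 + np.sqrt(1.0 - alpha_bars[t]) * eps
    return np.mean((eps - eps_model(xt, t)) ** 2)

print(denoising_loss(zero_eps))     # about 1.0, the variance of eps
print(denoising_loss(optimal_eps))  # noticeably lower
```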

Advancements in Stable Diffusion Deep Learning

Stable Diffusion Deep Learning has the potential to revolutionize several domains. In particular, it shows promise for generative modeling where it can generate new data instances that resemble the training data.

Predictive analytics, which covers everything from stock market forecasts to weather prediction and demand planning, could also benefit substantially from Stable Diffusion Deep Learning models. Computer vision, where models are trained to understand image and video data, also stands to gain from advances in this technology.

Challenges in Stable Diffusion Deep Learning

While the potential applications of Stable Diffusion Deep Learning are vast, the field faces several challenges. One of the biggest is the high computational cost of training and running these models. Generating a sample typically requires evaluating the network over many sequential diffusion steps, which is computationally expensive and time-consuming, especially for larger and more complex datasets.

Second, the quality and quantity of available training data are critical. Because these models learn from data, they require large training datasets, and poor-quality or insufficient data can yield inaccurate or biased models.

Finally, these complex models are difficult to interpret. This may hinder their adoption in fields where understanding the decision-making process is important, such as healthcare and finance.

Considerations for the Future

Going forward, techniques that reduce the models’ computational demands will be valuable. Effective methods for verifying and validating the models, along with techniques to mitigate bias and ensure fairness, will also be crucial for deploying Stable Diffusion Deep Learning models in the real world. As the field advances, it is expected to keep pushing the boundaries of machine learning and to pave the way for more sophisticated applications of artificial intelligence.

The exploration of stable diffusion deep learning opens up many possibilities and points toward a future rich in innovation. Despite the significant challenges, the stability it offers and its practical real-world applications could transform various industries.

As technology continues to evolve, stable diffusion deep learning has room for wider use and further improvement. As this prominent field of machine learning matures, it can adapt, address its challenges, and seize new opportunities, contributing to significant progress in artificial intelligence as a whole.

Emad Morpheus is a tech enthusiast with a unique flair for AI and art. Backed by a Computer Science background, he dove into the captivating world of AI-driven image generation five years ago. Since then, he has been honing his skills and sharing his insights on AI art creation through his blog posts. Outside his tech-art sphere, Emad enjoys photography, hiking, and piano.