Algorithms come in many families, each suited to different problems and constraints.

One important family is diffusion algorithms, which are used in fields ranging from telecommunications to machine learning.

This exploration delves into the intricate workings and core principles of these algorithms, with a particular emphasis on the aspect of stability.

We discuss approaches to stability, review selected stable diffusion algorithms in depth, and compare them on criteria such as stability, efficiency, complexity, and application scope.

Finally, we look at current trends and likely future developments in stable diffusion algorithms, and at where the field may grow.

Contents

- 1 Overview of Diffusion Algorithms

- 2 Approaches to Stability in Diffusion Algorithms

- 3 Review of Key Stable Diffusion Algorithms

- 3.1 Diving into the World of Stable Diffusion Algorithms

- 3.2 Least Mean Square (LMS) Algorithm

- 3.3 Adaptive Diffusion Least Mean Square (AD-LMS) Algorithm

- 3.4 Distributed Stochastic Gradient (DSG) Algorithm

- 3.5 Normalized Least Mean Square (NLMS) Algorithm

- 3.6 Incremental Least Mean Square (inc-LMS) Algorithm

- 4 Comparative Analysis of Stable Diffusion Algorithms

- 4.1 Digging Deeper into Stable Diffusion Algorithms

- 4.2 Types of Stable Diffusion Algorithms

- 4.3 Efficiency of Stable Diffusion Algorithms

- 4.4 Stability of Stable Diffusion Algorithms

- 4.5 Complexity of Stable Diffusion Algorithms

- 4.6 Application Scope of Stable Diffusion Algorithms

- 4.7 A Detailed Comparison of Stable Diffusion Algorithms

- 5 Trends and Future Perspectives on Stable Diffusion Algorithms

- 5.1 An In-Depth Look at Stable Diffusion Algorithms

- 5.2 Comparative Analysis of Stable Diffusion Algorithms

- 5.3 Current Trends in Stable Diffusion Algorithms

- 5.4 The Future of Stable Diffusion Algorithms

- 5.5 Challenges in Developing Stable Diffusion Algorithms

- 5.6 Impact of Research on Future Algorithm Development

Overview of Diffusion Algorithms

Analyzing Stable Diffusion Algorithms

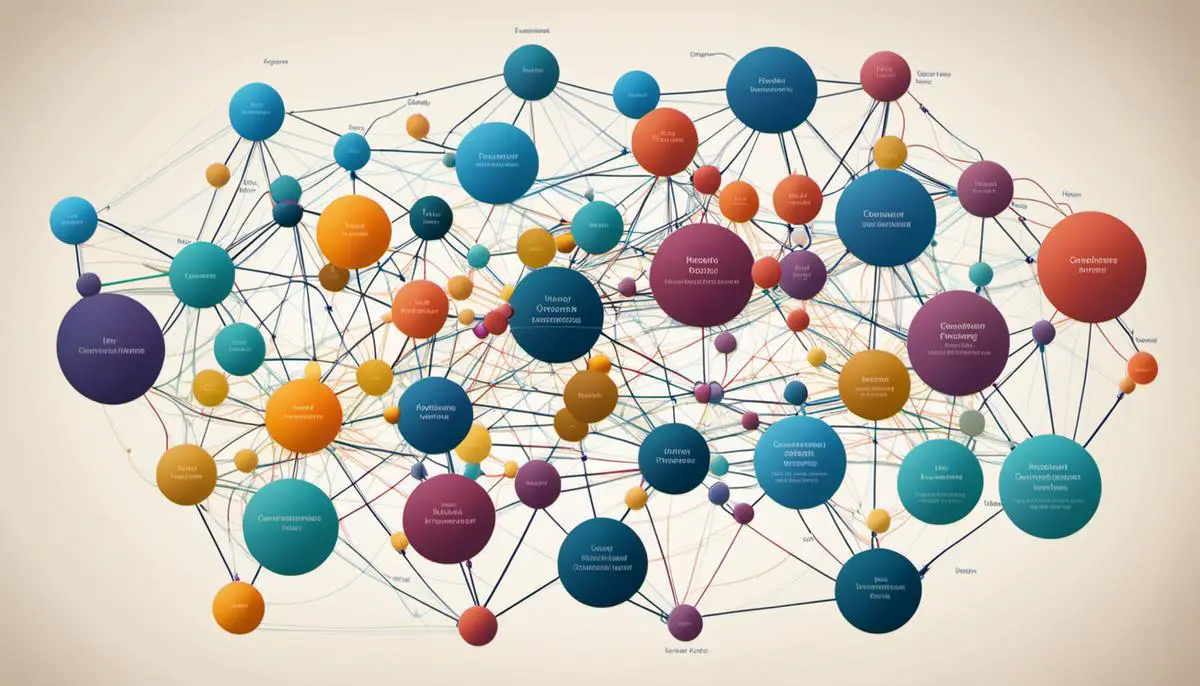

Diffusion algorithms are a class of computational methods modeled on the physical process of diffusion. The idea originates in physics, where particles disperse through a medium over time at a rate that depends on properties of the medium such as its density and temperature. The algorithms borrow the spreading mechanism rather than the physical detail.

This technique of algorithm development has been applied across various fields, including computer science, information technology, data mining, and image processing, owing to its inherent capacity to distribute information efficiently in every direction.

For instance, in computer networking, diffusion algorithms help maintain steady network traffic, and in social media targeting, they assist in spreading targeted ads across the network, thereby controlling data flow and spread.

How Diffusion Algorithms Work

The fundamental principle behind diffusion algorithms is modelling the process of diffusion, where particles move from an area of high concentration to an area of low concentration. This movement continues until a state of equilibrium is reached. This process is simulated in the algorithms, which use random walks to spread information or perform computations.

In these computations, “particles” can represent pieces of information, data values, or even candidate solutions to a given problem. These particles propagate along the available paths until the system reaches a state of balance or an optimal solution.
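The spreading process described above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: it uses deterministic neighbor averaging (the expected behavior of many random walkers) rather than simulating individual random walks, and the names `diffuse`, `rate`, and the four-node ring are assumptions made for the example.

```python
def diffuse(values, neighbors, rate=0.25, steps=200):
    """Each step, every node moves `rate` times the difference toward each neighbor."""
    x = list(values)
    for _ in range(steps):
        nxt = x[:]
        for i, nbrs in neighbors.items():
            for j in nbrs:
                # Net flow runs from the higher value to the lower one.
                nxt[i] += rate * (x[j] - x[i])
        x = nxt
    return x


# Hypothetical network: a ring of four nodes, with all "mass" starting on node 0.
ring = {0: [1, 3], 1: [0, 2], 2: [1, 3], 3: [2, 0]}
result = diffuse([8.0, 0.0, 0.0, 0.0], ring)
print([round(v, 6) for v in result])
```

Because every exchange is symmetric, the total is conserved, and the values settle at the average of the starting values (2.0 per node here), which is the equilibrium state the text refers to.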

Applications of Diffusion Algorithms

In the realm of technology, diffusion algorithms offer several inherent strengths and strategic advantages. In networking, for instance, these algorithms contribute to the stability of network traffic by evenly and efficiently distributing data packets, preventing network congestion or bottlenecking. This ensures a smoother and steady data flow, thereby enhancing overall operational efficiency.

In machine learning, diffusion algorithms form the basis of numerous adaptive learning and decision-making systems. These algorithms help bots “learn” over time, enabling them to react appropriately in various situations, thereby contributing to the advancement of AI and robotic systems.

In image and video processing, diffusion algorithms play a central role in space-time video processing and image restoration, where they improve quality by diffusing or spreading the pixel-intensities across frames.

In social media, diffusion algorithms support viral marketing by enabling the rapid and extensive spread of targeted advertising or promotional content.

Limitations of Diffusion Algorithms

Yet like any other technology, diffusion algorithms possess some limitations. The primary challenge with these algorithms lies in their dependency on the structure of the problem at hand.

Specifically, their efficiency can vary significantly with the connectivity and weight distribution of the network or problem graph. In addition, in larger networks, diffusion algorithms can suffer from slow convergence, which delays arrival at the final solution.
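To make the dependence on network structure concrete, the sketch below counts how many averaging steps two hypothetical 16-node networks need before all nodes agree to within a tolerance. The function name, the tolerance, and the choice of step size (one over the maximum degree plus one) are assumptions made for illustration.

```python
def steps_to_equilibrium(neighbors, values, tol=1e-6, max_steps=10_000):
    """Count averaging steps until the largest and smallest values differ by less than tol."""
    x = list(values)
    # Assumed step size: small enough to keep the update stable on any graph.
    rate = 1.0 / (1 + max(len(n) for n in neighbors.values()))
    for step in range(1, max_steps + 1):
        x = [xi + rate * sum(x[j] - xi for j in neighbors[i]) for i, xi in enumerate(x)]
        if max(x) - min(x) < tol:
            return step
    return max_steps


n = 16
ring = {i: [(i - 1) % n, (i + 1) % n] for i in range(n)}           # sparsely connected
complete = {i: [j for j in range(n) if j != i] for i in range(n)}  # fully connected
start = [float(n)] + [0.0] * (n - 1)

ring_steps = steps_to_equilibrium(ring, start)
complete_steps = steps_to_equilibrium(complete, start)
print(ring_steps, complete_steps)
```

The sparsely connected ring needs far more steps than the fully connected network, which is the slow-convergence effect described above.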

Investigating Stable Diffusion Algorithms: A Comparative Analysis

The differing performance of diffusion algorithms across applications calls for a careful comparative analysis. In this context, stability refers to the ability of an algorithm to reliably reproduce expected outcomes, irrespective of changes in workload, data input, or the state of the system.

A practical methodology for conducting a comparative analysis may involve testing the performance of multiple algorithms across different applications and network topologies.

This strategy would assist in evaluating key aspects such as robustness, convergence rate, and noise tolerance under a range of problem conditions. Following an exhaustive comparative analysis, it is possible to pinpoint the diffusion algorithm that not only performs the best, but also exhibits the highest level of stability across several applications.

Approaches to Stability in Diffusion Algorithms

Deciphering the Importance of Stability in Diffusion Algorithms

The stability of diffusion algorithms has considerable influence on the reliability and precision of the computational results they yield. This factor gauges the extent to which the algorithm’s performance remains consistent in the face of changes to the data structure, or when subjected to various environmental changes.

Conditions for Stability in Diffusion Algorithms

The conditions required for stability depend on the type of diffusion algorithm and the nature of the problem. However, some general conditions are common to most diffusion algorithms.

- The sequence of iterates produced by a diffusion algorithm should be bounded. This means that as the iterations progress, the values should not grow without limit but should remain within a certain range.

- All operations within the algorithm should be well-defined and maintain consistency despite changes in input. In other words, the algorithm must guarantee that the same output will be produced for the same data over time.

- The structure of data may undergo changes and yet, the diffusion algorithm must guarantee that the output remains within a defined boundary. This is particularly important when dealing with real-world applications where data sequences can have dynamic structures.

Methods for Assessing Stability

Several techniques are used to assess the stability of diffusion algorithms. Von Neumann analysis, also known as Fourier stability analysis, decomposes the discretization error into Fourier modes and checks, as a function of wave number, whether each mode grows or decays from one step to the next. Energy stability analyses instead track the total energy of the simulated system and check that it is conserved, or at least does not grow, over time.
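As a worked illustration of von Neumann analysis, consider the standard explicit (forward-time, centered-space) scheme for the one-dimensional heat equation. This scheme is chosen here as an example and is not taken from the article. With r = D·Δt/Δx², each Fourier mode is multiplied per step by g(θ) = 1 − 4r·sin²(θ/2), so the scheme is stable only when |g| ≤ 1 for every θ, which requires r ≤ 1/2. The sketch below checks this both analytically and by running the scheme; all function names are hypothetical.

```python
import math


def amplification(r, theta):
    """Per-step growth factor of a Fourier mode with phase angle theta."""
    return 1.0 - 4.0 * r * math.sin(theta / 2.0) ** 2


def max_amplification(r, samples=181):
    """Largest |g| over phase angles sampled on [0, pi]."""
    return max(abs(amplification(r, math.pi * k / (samples - 1))) for k in range(samples))


def ftcs_peak(r, steps=200, n=21):
    """Run the explicit scheme from a single spike and return the largest |u| at the end."""
    u = [0.0] * n
    u[n // 2] = 1.0
    for _ in range(steps):
        interior = [u[i] + r * (u[i - 1] - 2.0 * u[i] + u[i + 1]) for i in range(1, n - 1)]
        u = [0.0] + interior + [0.0]  # fixed zero boundaries
    return max(abs(v) for v in u)


for r in (0.4, 0.6):
    print(r, max_amplification(r), ftcs_peak(r))
```

For r = 0.4 no mode is amplified and the simulated values stay bounded. For r = 0.6 the highest-frequency mode has |g| of about 1.4 and the simulation grows without limit, which matches the boundedness condition listed earlier.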

Stability can also be determined empirically by running the diffusion algorithm numerous times with different data sets and observing how the output changes over time.
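An empirical check of this kind might look like the following sketch. It repeats a simple scalar adaptive update on several synthetic data sets (one per random seed) and reports the range of the final outputs. The update rule, the target value 2.0, the noise level, and the function names are all assumptions made for the example.

```python
import random


def scalar_lms_run(step, seed, n=500, target=2.0, noise=0.1):
    """One run of a scalar LMS-style update on synthetic data (all values are assumed)."""
    rng = random.Random(seed)
    est = 0.0
    for _ in range(n):
        x = rng.gauss(0.0, 1.0)
        d = target * x + rng.gauss(0.0, noise)  # noisy observation
        est += step * x * (d - est * x)         # correct the estimate along the error
    return est


def empirical_spread(step, seeds=range(20)):
    """Smallest and largest final estimate across independent runs."""
    outputs = [scalar_lms_run(step, s) for s in seeds]
    return min(outputs), max(outputs)


low, high = empirical_spread(0.05)
print(f"final estimates across runs lie in [{low:.3f}, {high:.3f}]")
```

A narrow range around the target suggests stable behavior for that step size. A range that widens sharply as the step size or the data changes is a warning sign worth investigating with the analytical tools above.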

Putting Stable Diffusion Algorithms into Context

Stable diffusion algorithms are not just theoretical concepts; they influence real-world scenarios in remarkable ways. For instance, these algorithms play a crucial role in heat transfer simulations, with stability ensuring accurate predictions. Without this stability, engineers risk misinterpreting data, leading to misinformed decisions.

Similarly, in the realm of epidemiology, diffusion algorithms assist in modelling and predicting disease spread. Any instability in these algorithms can result in overestimations or underestimations of disease spread, potentially leading to inefficient resource allocation by health authorities.

In the finance sector, stable diffusion algorithms facilitate reliable predictions on stock price movements under various market conditions. Thus, stability in these algorithms becomes a cornerstone for successful financial forecasting.

Indeed, the relevance of stability in diffusion algorithms is not confined to these fields but spans a plethora of areas. Given their impact on diverse sectors, it’s clear why improving the stability of diffusion algorithms, as well as developing methods to evaluate their relative performance, is of utmost importance.

Review of Key Stable Diffusion Algorithms

Diving into the World of Stable Diffusion Algorithms

Stable diffusion algorithms are versatile as well as essential: they offer reliable solutions for estimation in distributed networks, a key task in fields such as data analytics, sensor networks, and machine learning. Each algorithm has its own design principles and application areas, which opens up many opportunities for research and exploration.

Least Mean Square (LMS) Algorithm

One of the simplest building blocks for stable diffusion algorithms is the Least Mean Square (LMS) algorithm. It is a first-order iterative optimization method of the stochastic-gradient type: at each step it adjusts its weights along the negative gradient of the instantaneous squared error, as an approximation to minimizing the expected squared error. LMS is effective in adaptive filtering and control systems because of its simplicity and efficiency, although its performance can degrade in the presence of noise.
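A minimal sketch of the standard LMS update for a short FIR filter is shown below. The synthetic signal, the "unknown" coefficients `true_w`, the step size, and the noise level are assumptions chosen so that the example runs on its own.

```python
import random


def lms(inputs, desired, num_taps, step):
    """Standard LMS: w <- w + step * error * input_vector."""
    w = [0.0] * num_taps
    errors = []
    for n in range(num_taps - 1, len(inputs)):
        u = [inputs[n - k] for k in range(num_taps)]   # most recent sample first
        y = sum(wk * uk for wk, uk in zip(w, u))       # filter output
        e = desired[n] - y                             # instantaneous error
        w = [wk + step * e * uk for wk, uk in zip(w, u)]
        errors.append(e)
    return w, errors


# Synthetic system-identification problem (all values are assumptions).
rng = random.Random(0)
true_w = [0.5, -0.3, 0.1]
x = [rng.gauss(0.0, 1.0) for _ in range(3000)]
d = [sum(true_w[k] * x[n - k] for k in range(3) if n - k >= 0) + rng.gauss(0.0, 0.01)
     for n in range(len(x))]

w_hat, errs = lms(x, d, num_taps=3, step=0.05)
print([round(w, 3) for w in w_hat])
```

With a small enough step size the estimated weights settle close to `true_w`. Too large a step makes the recursion diverge, which is where the stability conditions discussed earlier come in.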

Adaptive Diffusion Least Mean Square (AD-LMS) Algorithm

Building on LMS, the Adaptive Diffusion Least Mean Square (AD-LMS) algorithm exploits the spatial diversity available in distributed networks: each node runs its own LMS update and shares estimates with its neighbors. Compared with conventional LMS, AD-LMS is more robust to modeling errors and noise, which improves its performance. Significant research has gone into improving its robustness and speed of convergence.
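One common way to organize a diffusion LMS network is the adapt-then-combine pattern: every node first takes a local LMS step, then averages the intermediate estimates of its neighborhood. The sketch below follows that pattern with uniform averaging weights. Whether this matches the exact AD-LMS variant meant here is an assumption, as are the three-node line network, the data model, and all names.

```python
import random


def atc_diffusion_lms(neighbors, true_w, step=0.05, iters=2000, noise=0.1, seed=0):
    """Adapt-then-combine diffusion LMS with uniform combination weights (a sketch)."""
    rng = random.Random(seed)
    n_nodes, dim = len(neighbors), len(true_w)
    w = [[0.0] * dim for _ in range(n_nodes)]
    for _ in range(iters):
        # Adapt: each node takes one LMS step on its own fresh measurement.
        psi = []
        for k in range(n_nodes):
            u = [rng.gauss(0.0, 1.0) for _ in range(dim)]
            d = sum(a * b for a, b in zip(u, true_w)) + rng.gauss(0.0, noise)
            e = d - sum(a * b for a, b in zip(u, w[k]))
            psi.append([wi + step * e * ui for wi, ui in zip(w[k], u)])
        # Combine: each node averages its own and its neighbors' intermediate estimates.
        w = []
        for k in range(n_nodes):
            group = [k] + list(neighbors[k])
            w.append([sum(psi[l][i] for l in group) / len(group) for i in range(dim)])
    return w


line = {0: [1], 1: [0, 2], 2: [1]}   # hypothetical 3-node line network
true_w = [1.0, -0.5]
estimates = atc_diffusion_lms(line, true_w)
for node, est in enumerate(estimates):
    print(node, [round(v, 3) for v in est])
```

The combine step is what lets each node benefit from measurements it never saw directly, which is the spatial diversity the paragraph above refers to.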

Distributed Stochastic Gradient (DSG) Algorithm

Another algorithm worth noting in this comparative analysis is the Distributed Stochastic Gradient (DSG) algorithm. Designed for decentralized optimization, it is robust in non-stationary environments, which makes it well suited to scenarios where data is non-IID or arrives asynchronously.
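The following sketch shows a generic decentralized stochastic gradient iteration: each node averages with its neighbors and then takes a noisy gradient step on its own local loss. It is meant only to illustrate the non-IID setting, where every node sees a different local target. The quadratic losses, the ring network, and all names are assumptions, and the specific DSG variant the section has in mind may differ.

```python
import random


def decentralized_sgd(neighbors, local_targets, step=0.05, iters=1000, noise=0.1, seed=0):
    """Each node minimizes 0.5 * (x - target_k)^2 using noisy gradients and neighbor averaging."""
    rng = random.Random(seed)
    x = [0.0] * len(local_targets)
    for _ in range(iters):
        new_x = []
        for k, target in enumerate(local_targets):
            group = [k] + list(neighbors[k])
            mixed = sum(x[l] for l in group) / len(group)   # consensus (averaging) step
            grad = (x[k] - target) + rng.gauss(0.0, noise)  # noisy local gradient
            new_x.append(mixed - step * grad)
        x = new_x
    return x


ring = {0: [1, 3], 1: [0, 2], 2: [1, 3], 3: [2, 0]}
targets = [1.0, 2.0, 3.0, 6.0]   # deliberately different data at every node
final = decentralized_sgd(ring, targets)
print([round(v, 2) for v in final])
```

Although no node ever sees the other nodes' targets, all of them end up near 3.0, the minimizer of the summed losses. With a constant step size the agreement is only approximate, and a smaller step tightens it at the cost of slower convergence.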

Normalized Least Mean Square (NLMS) Algorithm

Next is the Normalized Least Mean Square (NLMS) algorithm. Its main advantage is that it normalizes the step size by the power of the current input vector, so the effective step adapts automatically to the signal level and less manual tuning is needed. This makes NLMS a common choice for echo cancellation and channel equalization.
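A minimal NLMS sketch follows. The only change from plain LMS is that the step is divided by the squared norm of the input vector plus a small constant `eps` to avoid division by zero. The test signal is deliberately scaled by 100 to show that the same step size still works. The data and names are assumptions made for the example.

```python
import random


def nlms(inputs, desired, num_taps, step=0.5, eps=1e-8):
    """Normalized LMS: the step is scaled by the instantaneous input power."""
    w = [0.0] * num_taps
    for n in range(num_taps - 1, len(inputs)):
        u = [inputs[n - k] for k in range(num_taps)]
        e = desired[n] - sum(wk * uk for wk, uk in zip(w, u))
        power = eps + sum(uk * uk for uk in u)
        w = [wk + (step / power) * e * uk for wk, uk in zip(w, u)]
    return w


rng = random.Random(1)
true_w = [0.5, -0.3, 0.1]
x = [100.0 * rng.gauss(0.0, 1.0) for _ in range(2000)]   # large-amplitude input
d = [sum(true_w[k] * x[n - k] for k in range(3) if n - k >= 0) + rng.gauss(0.0, 0.01)
     for n in range(len(x))]

w_hat = nlms(x, d, num_taps=3)
print([round(w, 3) for w in w_hat])
```

A plain LMS filter with a fixed step tuned for unit-variance input would diverge on this signal. The normalization removes that sensitivity to input scale.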

Incremental Least Mean Square (inc-LMS) Algorithm

Finally, the Incremental Least Mean Square (inc-LMS) algorithm extends the traditional LMS algorithm. It processes the data in a fixed cyclic order, which helps it follow changes in the data and reduces the risk of divergence. The inc-LMS algorithm has found applications in adaptive beamforming and power system state estimation.
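The cyclic processing can be sketched as a single estimate that is passed from one data source to the next in a fixed order, with each source applying one LMS update using its own sample. This is one reading of the incremental scheme. The four sources, the data model, and the names below are assumptions.

```python
import random


def incremental_lms(cycles, dim, step):
    """cycles: list of cycles; each cycle lists one (u, d) pair per source, in visiting order."""
    w = [0.0] * dim
    for cycle in cycles:
        for u, d in cycle:   # the shared estimate visits the sources in a fixed cyclic order
            e = d - sum(wi * ui for wi, ui in zip(w, u))
            w = [wi + step * e * ui for wi, ui in zip(w, u)]
    return w


rng = random.Random(2)
true_w = [1.0, -2.0]


def sample():
    """Draw one hypothetical regressor and noisy measurement."""
    u = [rng.gauss(0.0, 1.0) for _ in true_w]
    d = sum(a * b for a, b in zip(u, true_w)) + rng.gauss(0.0, 0.1)
    return u, d


data = [[sample() for _source in range(4)] for _cycle in range(500)]
w_hat = incremental_lms(data, dim=2, step=0.05)
print([round(w, 3) for w in w_hat])
```

Unlike the diffusion scheme, only one estimate circulates, so communication per step is low, but the visiting order has to be maintained.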

Whether it be in terms of unique design principles or application varieties, each stable diffusion algorithm holds its own. However, the value of their comparative analysis lies in its ability to highlight their respective strengths and weaknesses across a wide range of scenarios. It’s important to note that continuous progress in research ensures that these algorithms are regularly updated, with the goal of creating more robust and efficient models for use across distributed networks.


Comparative Analysis of Stable Diffusion Algorithms

Digging Deeper into Stable Diffusion Algorithms

Stable diffusion algorithms play a significant role in the transport and distribution of data across networks. They address the risk of node or system overload under heavy traffic, thereby paving the way for uninterrupted network communication.

Types of Stable Diffusion Algorithms

Some common stable diffusion algorithms include Congestion Control Algorithms, Random Early Detection, Weighted Random Early Detection, and Queuing Disciplines. Each plays a distinct role in managing data flow and stabilizing the system by adjusting rates and other parameters. For instance, Random Early Detection anticipates queue congestion and acts preemptively to avoid overload, whereas Weighted Random Early Detection gives some classes of traffic precedence over others, delaying or dropping lower-priority traffic first.
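The core of Random Early Detection is a drop probability that rises linearly as the averaged queue length moves between two thresholds. The sketch below shows that basic rule and a weighted variant that simply uses different thresholds per traffic class. It omits refinements found in real implementations, and the class names and threshold values are hypothetical.

```python
def red_drop_probability(avg_queue, min_th, max_th, max_p):
    """Basic RED rule: no drops below min_th, linear ramp up to max_p, drop all at max_th."""
    if avg_queue < min_th:
        return 0.0
    if avg_queue >= max_th:
        return 1.0
    return max_p * (avg_queue - min_th) / (max_th - min_th)


def update_average(avg_queue, queue_len, weight=0.002):
    """Exponentially weighted moving average of the queue length."""
    return (1.0 - weight) * avg_queue + weight * queue_len


# Hypothetical WRED profiles: higher-priority traffic starts being dropped later.
WRED_PROFILES = {
    "high_priority": {"min_th": 30, "max_th": 40, "max_p": 0.05},
    "best_effort": {"min_th": 10, "max_th": 40, "max_p": 0.10},
}


def wred_drop_probability(avg_queue, traffic_class):
    """Weighted RED: apply the RED rule with the thresholds of the packet's class."""
    return red_drop_probability(avg_queue, **WRED_PROFILES[traffic_class])


for cls in WRED_PROFILES:
    print(cls, wred_drop_probability(25, cls))
```

At an average queue length of 25, best-effort packets already face a non-zero drop probability while high-priority packets do not. This is how the weighted variant gives precedence to specific traffic.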

Efficiency of Stable Diffusion Algorithms

In terms of efficiency, algorithms vary according to how they operate. Congestion control algorithms are reactive: they reduce the rate at which packets are sent once congestion is detected, which keeps the network operating efficiently. Mechanisms such as Random Early Detection, by contrast, work predictively, which makes them more efficient at anticipating congestion and acting before an overload occurs.
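A classic example of this kind of rate reduction is additive-increase, multiplicative-decrease (AIMD), the rule behind TCP-style congestion control. It is used here as a stand-in, since the article does not name a specific congestion control algorithm. The parameter values are the conventional ones, and the loss pattern is made up for the example.

```python
def aimd(cwnd, loss_events, increase=1.0, decrease=0.5, min_cwnd=1.0):
    """Grow the window by a constant each round; cut it multiplicatively on a loss signal."""
    trace = []
    for lost in loss_events:
        if lost:
            cwnd = max(min_cwnd, cwnd * decrease)   # back off when congestion is detected
        else:
            cwnd = cwnd + increase                  # probe for spare capacity
        trace.append(cwnd)
    return trace


trace = aimd(10.0, [False, False, True, False])
print(trace)   # [11.0, 12.0, 6.0, 7.0]
```

The slow growth and sharp back-off produce the familiar sawtooth sending rate, which keeps the system stable when many senders share a link.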

Stability of Stable Diffusion Algorithms

The stability of diffusion algorithms is crucial in maintaining the smooth operation of a network. Congestion Control Algorithms maintain stability by dynamically adjusting the sending rate of packets based on the congestion detected. Similarly, Queuing Disciplines algorithms maintain stability by managing the storage of the packets and ensuring each packet is efficiently processed before moving on to the next.

Complexity of Stable Diffusion Algorithms

The complexity of stable diffusion algorithms is determined by the intricacy of their operation. While an algorithm like Congestion Control might be relatively straightforward since it operates on simple principles of reducing packet transmission during congestion, algorithms like Weighted Random Early Detection might be more complex, as they involve considerations of factors like weight, priorities, and randomness in their operation.

Application Scope of Stable Diffusion Algorithms

The application scope of these algorithms is extensive, covering different networks and traffic types. Congestion Control algorithms are applicable in virtually every networking system due to their straightforward approach to monitoring and reducing congestion. Similarly, Random Early Detection and Weighted Random Early Detection algorithms, due to their predictive mechanism, are useful in applications where congestion anticipation and traffic management are critical, like in media streaming or video conferencing.

A Detailed Comparison of Stable Diffusion Algorithms

To cater to varying needs, there are numerous stable diffusion algorithms, each with their unique strengths. For general networking system applications, congestion control algorithms are an ideal choice due to their robustness and reliability.

On the other hand, when precision and sophisticated traffic management matter, the Weighted Random Early Detection algorithm might be the better option. The choice of stable diffusion algorithm depends largely on the particular requirements and limitations of the network at hand. It is therefore important to understand each algorithm thoroughly, including its advantages and drawbacks, to manage the network effectively and efficiently.

Trends and Future Perspectives on Stable Diffusion Algorithms

An In-Depth Look at Stable Diffusion Algorithms

As fundamental components of many computer science processes, stable diffusion algorithms contribute significantly to the development of more efficient machine learning and data processing methods. These algorithms systematically spread or distribute information across a network, employing a series of specific rules to control the diffusion process.

This model is underpinned by mathematical diffusion principles and uses error-checking techniques to maintain reliable performance, so that the output stays consistent with the expected results. With precision and predictability at their core, these algorithms are designed for stability, which in turn supports algorithmic performance, processing speed, and scalability.

Comparative Analysis of Stable Diffusion Algorithms

Numerous stable diffusion algorithms exist, each with its unique strengths and weaknesses. Their comparative analysis allows researchers to identify key differences and similarities, offering insights into their performance under varying conditions.

Some algorithms may excel in running complex tasks, while others may provide better solutions for data-heavy operations. Specialized diffusion algorithms cater to different areas like image processing, optimization problems, and machine learning applications. Consequently, this comparison offers enthusiasts an understanding of which algorithms function better under specific situations.

Current Trends in Stable Diffusion Algorithms

Despite their proven efficacy, constant advancements in technology are fueling the need for even better stable diffusion algorithms. Today, researchers are developing cutting-edge algorithms that take advantage of faster computing power, better memory management, and more sophisticated software systems.

There is a particular emphasis on designing models that can handle more complex, data-intensive tasks, especially in machine learning and artificial intelligence fields. Researchers are also focusing on enhancing algorithms’ robustness to handle unexpected mishaps, such as data errors or system failures, without compromising stability.

The Future of Stable Diffusion Algorithms

Emerging technologies and theories are adding momentum to further innovation in stable diffusion algorithms. High on many researchers’ agendas are algorithms that learn and improve over time, algorithms that yield better predictive models, and algorithms that can repair themselves autonomously when they encounter errors.

One area of keen interest is quantum computing: the potential to design quantum diffusion algorithms that could perform calculations beyond the capabilities of classical computers. As this technology matures, it could revolutionize the way we use algorithms, offering unprecedented speed and computing power.

Challenges in Developing Stable Diffusion Algorithms

Designing and implementing stable diffusion algorithms is not without its challenges. Top of the list is achieving stability under varying conditions: an algorithm must operate correctly, even under strain, and continue providing accurate results over time.

Algorithmic complexity is another concern, particularly as tasks become more complicated and data-heavy. As technology continues to evolve, the need for algorithms to adapt and grow cannot be overemphasized. Likewise, considering security implications becomes crucial, as security breaches or unauthorized data access can disrupt the stability of these algorithms.

Impact of Research on Future Algorithm Development

The current state of research in stable diffusion algorithms promises a sea of possibilities for their future integration into systems. Researchers are gaining a deeper understanding of these algorithms, paving the way for more advanced applications.

These new developments could significantly transform various industries and drive substantial growth in AI, data processing, and machine learning applications. As such, the comparative analysis of stable diffusion algorithms will remain a vital tool for gaining insight into their usability, functionality, and overall performance.

Fueled by relentless innovation and a deeper understanding of technological possibilities, the realm of stable diffusion algorithms promises to become more sophisticated, versatile, and impactful over time. This comparative analysis corroborates the diverse strengths and nuances of different diffusion algorithms, all affirming the profound role stability plays in their efficacy.

As we delve in-depth into each system, it becomes evident that algorithmic performance is shaped by numerous intersecting factors. With the rapid advancement of technologies, the future holds immense opportunities for the growth and evolution of stable diffusion algorithms.

They will continue to underpin numerous applications, fostering a world where efficiency, stability, and precision are paramount.

Emad Morpheus is a tech enthusiast with a unique flair for AI and art. Backed by a Computer Science background, he dove into the captivating world of AI-driven image generation five years ago. Since then, he has been honing his skills and sharing his insights on AI art creation through his blog posts. Outside his tech-art sphere, Emad enjoys photography, hiking, and piano.